LLaMA vs ChatGPT: Which AI Model Fits Your Needs in 2026?

You chose an AI model, integrated it into your workflow, and then hit a wall: either the API bill arrived, or your data left your servers. That frustration is real, and it is driving thousands of developers and businesses to compare LLaMA and ChatGPT side by side before committing.

LLaMA, developed by Meta AI, and ChatGPT, developed by OpenAI, represent 2 fundamentally different approaches to large language model deployment. One is open-source and self-hostable; the other is a commercial API product with managed infrastructure. The right choice depends on 5 core factors: cost structure, privacy requirements, performance benchmarks, coding capabilities, and deployment flexibility.

What Is LLaMA?

LLaMA is an open-source large language model series developed by Meta AI, with model weights released publicly for research and commercial use under the Llama Community License. The LLaMA 3.1 series, released in July 2024, includes 3 model sizes: 8B, 70B, and 405B parameters.

Meta trained LLaMA 3.1 405B on over 15 trillion tokens of publicly available data. The 405B variant achieves performance comparable to GPT-4o on several benchmarks, including MMLU (88.6% vs GPT-4o’s 88.7%) and HumanEval coding tasks, according to Meta’s technical report.

LLaMA runs locally on consumer hardware at the 8B scale (often quantized), on a single multi-GPU server at the 70B scale, and on multi-GPU server infrastructure at the 405B scale. Organizations including IBM, Microsoft Azure, and Amazon Web Services (AWS) have integrated LLaMA 3.1 into their cloud platforms.
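The hardware tiers above follow from a back-of-the-envelope rule: weight memory is roughly parameter count times bytes per parameter. The Python sketch below applies that rule to the three LLaMA 3.1 sizes; it assumes weights only and uses illustrative precision labels, so real deployments need extra headroom for the KV cache and runtime.

```python
# Back-of-the-envelope weight memory: parameters x bytes per parameter.
# Assumption: weights only. The KV cache (which grows with context length),
# activations, and runtime overhead need extra memory on top of this.

BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit weights (FP16 or BF16)
    "int8": 1.0,  # 8-bit quantization
    "int4": 0.5,  # 4-bit quantization (ignores small per-group scale overhead)
}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes).

    1e9 parameters x N bytes = N GB, so the billions cancel out.
    """
    return params_billions * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    for size in (8, 70, 405):
        estimates = ", ".join(
            f"{precision} ~{weight_memory_gb(size, precision):g} GB"
            for precision in BYTES_PER_PARAM
        )
        print(f"LLaMA 3.1 {size}B: {estimates}")
```

At 16-bit precision the 70B model needs about 140 GB for weights alone, more than any single consumer GPU holds, while the 8B model quantized to 4 bits needs about 4 GB.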

What Is ChatGPT?

ChatGPT is a commercial conversational AI product built by OpenAI; this comparison uses GPT-4o, ChatGPT’s default model through mid-2025. OpenAI launched ChatGPT in November 2022 and has since reached over 180 million weekly active users, according to OpenAI’s 2024 revenue disclosures.

GPT-4o processes text, images, audio, and documents within a single multimodal architecture. OpenAI provides ChatGPT through 3 access tiers: the free tier (GPT-4o mini), ChatGPT Plus at $20/month (GPT-4o), and ChatGPT Team/Enterprise for organizational deployments.

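At launch, the GPT-4o API charged $5 per million input tokens and $15 per million output tokens; later GPT-4o snapshots are priced lower (gpt-4o-2024-08-06 is listed at $2.50 input and $10 output per million tokens), so current rates should be checked on OpenAI’s pricing page. ChatGPT can search the web (originally through a Bing integration) and supports GPTs, custom AI assistants built on ChatGPT; the earlier plugins feature was retired in 2024.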
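Because input and output tokens are billed at different rates, the cost of a request depends on its prompt-to-answer mix rather than on a single per-token price. A minimal Python sketch, assuming the $5 input / $15 output per-million rates quoted above; the workload numbers in the example are hypothetical.

```python
# Estimate GPT-4o API cost from token counts.
# Assumption: $5 per 1M input tokens and $15 per 1M output tokens;
# substitute current rates from OpenAI's pricing page.

INPUT_USD_PER_MILLION = 5.00
OUTPUT_USD_PER_MILLION = 15.00


def api_cost_usd(input_tokens: int, output_tokens: int,
                 input_rate: float = INPUT_USD_PER_MILLION,
                 output_rate: float = OUTPUT_USD_PER_MILLION) -> float:
    """Cost in USD: each token class is billed at its own per-million rate."""
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 2,000-token prompt, 500-token answer, 10,000 requests/day.
    per_request = api_cost_usd(2_000, 500)
    print(f"per request: ${per_request:.4f}")          # $0.0175
    print(f"per day:     ${per_request * 10_000:,.2f}")  # $175.00
```

Swapping in current rates only requires changing the two constants; the same function shows why a prompt-heavy workload lands near the bottom of the $5–$15 range and an output-heavy one near the top.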

LLaMA vs ChatGPT: 6 Key Differences

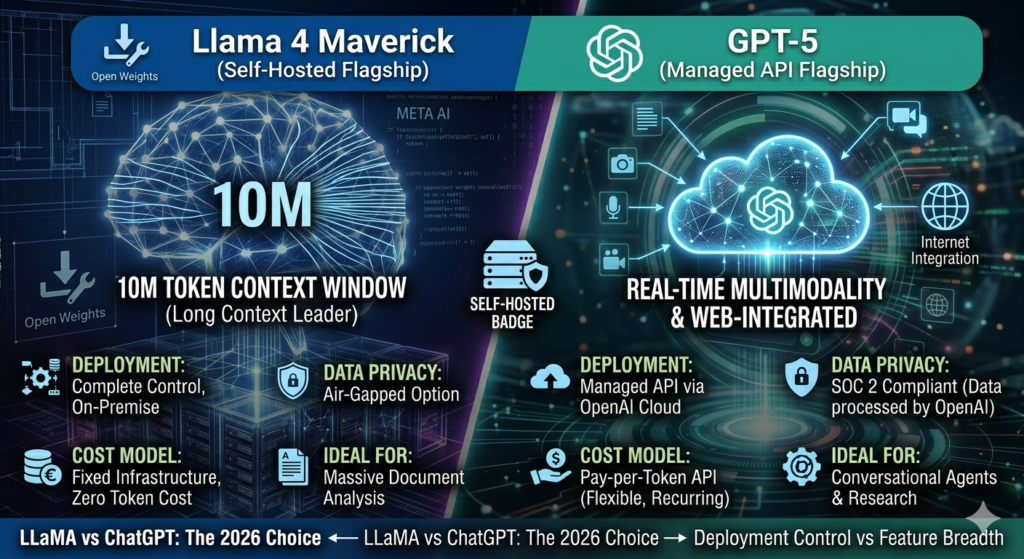

The 6 most significant differences between LLaMA and ChatGPT are licensing, cost model, data privacy, deployment control, multimodal capability, and fine-tuning access. Both offer the same 128K-token context window.

| Feature | LLaMA 3.1 (405B) | ChatGPT (GPT-4o) |
|---|---|---|
| Developer | Meta AI | OpenAI |
| License | Open-source (Llama Community License) | Proprietary |
| Cost | Infrastructure cost only | $5–$15 per million tokens (API) |
| Deployment | Self-hosted or cloud | API / web interface only |
| Context Window | 128K tokens | 128K tokens |
| Multimodal | Text only (base) | Text, image, audio, documents |
| Fine-tuning | Full access to weights | Limited fine-tuning via API |
| Data Privacy | Complete (on-premise option) | Data processed by OpenAI servers |

Which Model Performs Better on Benchmarks?

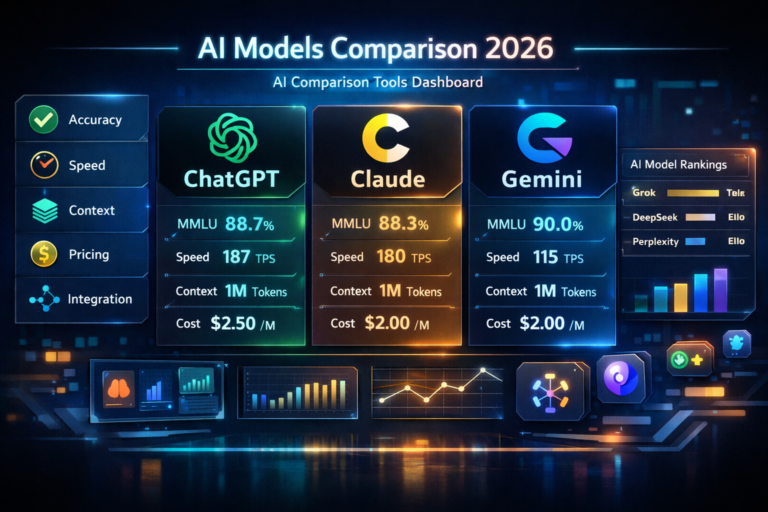

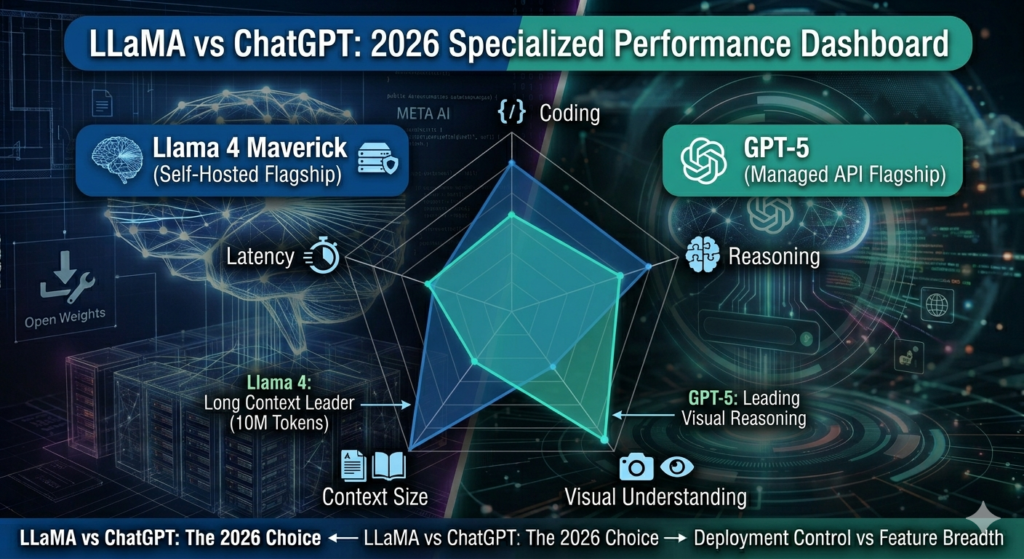

LLaMA 3.1 405B and GPT-4o perform at comparable levels on standard NLP benchmarks, with GPT-4o maintaining a measurable lead on multimodal and reasoning tasks.

On the MMLU benchmark (measuring knowledge across 57 academic subjects), LLaMA 3.1 405B scores 88.6% versus GPT-4o’s 88.7%. On HumanEval (measuring Python code generation), LLaMA 3.1 405B scores 89.0% versus GPT-4o’s 90.2%.

GPT-4o leads on 3 specific task categories: visual reasoning, real-time web access, and complex instruction following with tools. LLaMA leads on 2 categories: total cost at scale and privacy-sensitive deployments where data cannot leave the organization’s infrastructure.

Benchmark Note: According to Meta’s LLaMA 3.1 technical report (July 2024), the 405B model matches or exceeds GPT-4 performance on 24 of 30 evaluated benchmarks, while GPT-4o scores higher on vision-language tasks where LLaMA’s base model has no image input capability.

LLaMA vs ChatGPT for Coding

Both LLaMA 3.1 and GPT-4o generate functional code across multiple programming languages, including Python, JavaScript, TypeScript, Go, and Rust.

GPT-4o coding strengths include:

- Generates multi-file project structures with a single prompt

- Available inside GitHub Copilot, which offers OpenAI models including GPT-4o

- Interprets code screenshots and error images directly

- Connects to Code Interpreter for live code execution

LLaMA 3.1 coding strengths include:

- Runs inside private CI/CD pipelines without data leaving the environment

- Supports full fine-tuning on proprietary codebases

- Operates at zero marginal API cost for high-volume code generation tasks

- Integrates with Ollama, LM Studio, and vLLM for local deployment
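One practical consequence of these runtimes: Ollama and vLLM both expose OpenAI-compatible chat-completions endpoints, so the same client code can target GPT-4o or a local LLaMA model by changing the base URL and model name. The sketch below only builds the HTTP request with Python's standard library; the endpoint table, default ports, and model identifiers are assumptions to adjust for a given setup.

```python
import json
import urllib.request

# Hypothetical endpoint table: (base URL, model name) per backend.
# Ollama and vLLM serve an OpenAI-compatible /v1/chat/completions route;
# the ports shown are their defaults, and the model names are examples.
ENDPOINTS = {
    "openai": ("https://api.openai.com/v1", "gpt-4o"),
    "ollama": ("http://localhost:11434/v1", "llama3.1"),
    "vllm": ("http://localhost:8000/v1", "meta-llama/Llama-3.1-8B-Instruct"),
}


def build_chat_request(backend: str, prompt: str, api_key: str = "") -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for the chosen backend."""
    base_url, model = ENDPOINTS[backend]
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:  # OpenAI requires a bearer token; local servers usually do not
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("ollama", "Write a Python function that reverses a string.")
    print(req.full_url)  # http://localhost:11434/v1/chat/completions
    # Sending it requires a running server:
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp)["choices"][0]["message"]["content"])
```

Keeping the backend choice in one table lets a team prototype against GPT-4o and move sensitive workloads to a self-hosted LLaMA endpoint without rewriting call sites.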

Developers building internal tools with sensitive codebases benefit from LLaMA’s on-premise deployment. Teams requiring multimodal debugging (reading error screenshots, UI designs) benefit from GPT-4o’s vision input capability.

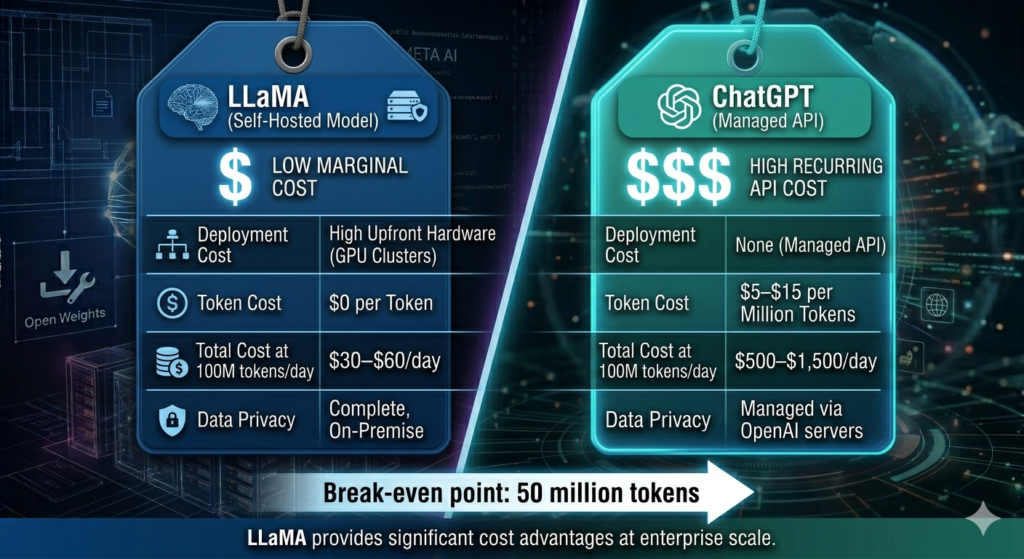

LLaMA vs ChatGPT: Cost Comparison

LLaMA operates at infrastructure cost only, while ChatGPT charges per token via OpenAI’s API.

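| Cost Scenario | GPT-4o (ChatGPT API) | LLaMA 3.1 70B (Self-Hosted) | Savings with LLaMA |
|---|---|---|---|
| Price per million tokens | $5–$15 | $0 (no per-token API fee) | Per-token API cost eliminated |
| 100M tokens/day | $500–$1,500/day | ~$787/day (1× AWS p4d.24xlarge at ~$32.77/hour on-demand, 24 hours) | Up to ~$713/day at $15/M; GPT-4o is ~$287/day cheaper at $5/M |
| 1B tokens/month | $5,000–$15,000/month | ~$23,600/month if the same instance runs 24/7; less if shut down when idle | None at 24/7 on-demand pricing; depends on utilization |
| Individual user (Plus) | $20/month (ChatGPT Plus) | GPU server required | ChatGPT wins at this scale |
| Hardware requirement | None (managed API) | Multi-GPU server (p4d.24xlarge: 8× A100 40GB) | N/A |
| Infrastructure managed by | OpenAI | Your team | N/A |

At 100 million tokens per day, running LLaMA 3.1 70B on one AWS p4d.24xlarge around the clock costs about $787/day at the us-east-1 on-demand list rate of roughly $32.77/hour (current rates may differ), versus $500–$1,500/day for GPT-4o, assuming a single instance can sustain that throughput. Self-hosting therefore saves up to about 48% at the top of GPT-4o’s price range and costs more at the bottom. At 1 billion tokens per month, an always-on instance (~$23,600/month) costs more than GPT-4o’s $5,000–$15,000 unless it is scaled down when idle. Because infrastructure cost stays roughly fixed while API cost grows linearly with tokens, LLaMA’s cost advantage appears above a break-even volume and widens from there; reserved instances or spot capacity lower that break-even point.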
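The underlying comparison is a fixed cost against a linear one: a self-hosted server costs roughly the same per day regardless of load, while API spend grows with every token. A minimal Python sketch of the break-even volume, assuming a fixed daily server cost and a single blended API price; the $800/day server figure in the example is hypothetical, not an AWS quote.

```python
# Break-even volume: the daily token count at which a fixed-cost
# self-hosted server matches per-token API billing.
# Assumptions: the server's daily cost does not change with load, and one
# blended API price per million tokens applies to all traffic.


def break_even_tokens_per_day(server_usd_per_day: float,
                              api_usd_per_million: float) -> float:
    """Tokens/day above which self-hosting is cheaper than the API."""
    return server_usd_per_day / api_usd_per_million * 1_000_000


def daily_savings_usd(tokens_per_day: float, server_usd_per_day: float,
                      api_usd_per_million: float) -> float:
    """Positive result = self-hosting saves money; negative = the API is cheaper."""
    api_cost = tokens_per_day / 1_000_000 * api_usd_per_million
    return api_cost - server_usd_per_day


if __name__ == "__main__":
    server = 800.0  # hypothetical GPU server cost per day
    for price in (5.0, 15.0):  # GPT-4o input and output rates, $/1M tokens
        tokens = break_even_tokens_per_day(server, price)
        print(f"${price:.0f}/1M tokens: break-even at {tokens / 1e6:.1f}M tokens/day")
```

At a hypothetical $800/day server cost, break-even falls at about 53M tokens/day if all traffic is billed at $15 per million and 160M tokens/day at $5 per million; below that volume, the API is cheaper.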

ChatGPT Plus at $20/month remains cost-effective for individual users who require web browsing, image generation via DALL-E 3, and document analysis without managing infrastructure.

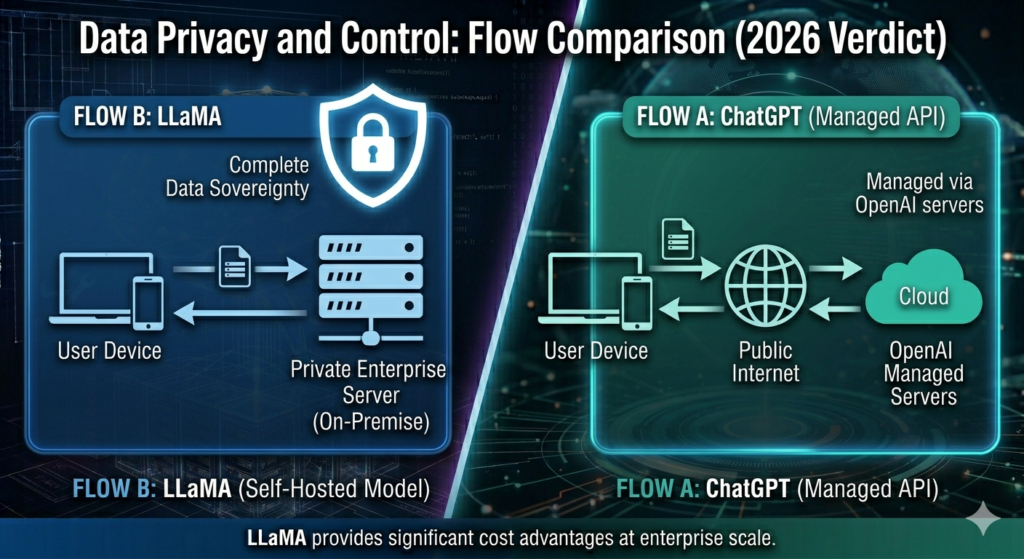

LLaMA vs ChatGPT for Privacy and Data Control

LLaMA provides complete data sovereignty through on-premise and private cloud deployment, while ChatGPT processes all inputs through OpenAI’s servers.

Organizations operating under HIPAA (healthcare), GDPR (European data protection), or SOC 2 compliance frameworks face requirements on where sensitive data is processed and who can access it, which can restrict sending that content to third-party APIs. Deploying LLaMA 3.1 on-premise or on private AWS, Azure, or Google Cloud instances keeps data inside infrastructure the organization controls, which makes these requirements easier to satisfy.

OpenAI offers enterprise agreements with data processing addendums and zero data retention options. ChatGPT Enterprise includes a guarantee that OpenAI does not train on enterprise customer data. However, the fundamental architecture still routes data through OpenAI’s infrastructure.

Healthcare organizations like Mayo Clinic and financial institutions including JPMorgan Chase have explored LLaMA deployments specifically for this reason: processing patient and financial data without external API exposure.

LLaMA vs ChatGPT for Content Writing

Both models generate high-quality long-form content, but they differ on 3 important dimensions: real-time information access, consistency at scale, and fine-tuning capability for brand voice.

ChatGPT with web browsing accesses current events, competitor pricing, and recent publications. LLaMA 3.1’s knowledge cutoff is December 2023, and the base model has no internet access, though frameworks such as LlamaIndex and services such as Perplexity AI connect LLaMA models to external retrieval systems.
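Retrieval works by fetching relevant documents first and placing them in the prompt, so the model answers from supplied context instead of its training data. The Python sketch below shows that flow with simple word-overlap ranking; it is a hypothetical stand-in, not LlamaIndex's API, which typically relies on embeddings and vector indexes, and the sample documents are invented.

```python
import re

# Toy retrieval-augmented prompt builder (hypothetical, for illustration).
# Ranks documents by how many words they share with the question.


def tokenize(text: str) -> set[str]:
    """Lowercase word set; punctuation is dropped."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents with the largest word overlap with the question."""
    q = tokenize(question)
    # sorted() stays stable with reverse=True, so ties keep their original order.
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, documents: list[str], k: int = 2) -> str:
    """Prepend the retrieved documents as context for the model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    docs = [  # invented sample documents
        "Q3 pricing update: the Pro plan now costs $49 per month.",
        "Our office is closed on public holidays.",
        "The Pro plan includes priority support and SSO.",
    ]
    print(build_prompt("How much does the Pro plan cost per month?", docs))
```

The resulting prompt string can be sent to any LLaMA endpoint; only the retrieval step changes when a real index replaces the overlap score.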

Content teams requiring high-volume, brand-consistent output benefit from fine-tuning LLaMA on proprietary content corpora. Marketing agencies requiring research-backed, real-time content benefit from ChatGPT’s web search and custom GPTs.
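Fine-tuning for brand voice starts by turning approved content into prompt-and-response training examples. A minimal Python sketch, assuming a chat-style JSONL layout with a `messages` list (the format OpenAI's fine-tuning API documents; open-source LLaMA fine-tuning tools commonly accept similar conversational records, but the exact schema depends on the tool). The brand name and samples are hypothetical.

```python
import json

# Convert approved writing samples into chat-format JSONL training records.
# Assumption: {"messages": [...]} layout; check the schema your tool expects.

SYSTEM_PROMPT = "You write in Acme Corp's brand voice."  # hypothetical brand


def to_record(brief: str, approved_copy: str) -> dict:
    """One training example: instruction in, on-brand copy out."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": brief},
            {"role": "assistant", "content": approved_copy},
        ]
    }


def to_jsonl(pairs: list[tuple[str, str]]) -> str:
    """Serialize one JSON object per line, as JSONL training files expect."""
    lines = (json.dumps(to_record(brief, copy), ensure_ascii=False) for brief, copy in pairs)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    samples = [  # hypothetical brief and approved copy
        ("Announce our spring sale in one sentence.",
         "Spring into savings: everything is 20% off this week."),
    ]
    print(to_jsonl(samples), end="")
```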

LLaMA vs ChatGPT: Multimodal Capabilities

GPT-4o processes text, images, audio, and document inputs within a single model. LLaMA 3.1 (base) processes text only, though Meta released LLaMA 3.2 Vision models (11B and 90B) in September 2024 with image understanding capability.

GPT-4o accepts JPEG, PNG, GIF, and WebP images up to 20MB and analyzes charts, diagrams, handwritten notes, and UI screenshots. This capability supports 4 high-value use cases: visual QA systems, document digitization, UI design analysis, and medical image captioning.
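On the API side, an image travels to GPT-4o as one content part of a chat message, alongside the text question. A minimal Python sketch, assuming the Chat Completions message format with an inline base64 data URL; the extension-based type check and the 20MB limit mirror the text above and are simplifications, not OpenAI's validation logic.

```python
import base64
import os

# MIME types for the image formats listed above.
IMAGE_MIME_TYPES = {
    ".jpg": "image/jpeg",
    ".jpeg": "image/jpeg",
    ".png": "image/png",
    ".gif": "image/gif",
    ".webp": "image/webp",
}
# Assumption: the 20MB limit is treated as 20 MiB here.
MAX_IMAGE_BYTES = 20 * 1024 * 1024


def image_message(filename: str, data: bytes, question: str) -> dict:
    """Build a Chat Completions user message pairing a question with an inline image."""
    ext = os.path.splitext(filename)[1].lower()
    if ext not in IMAGE_MIME_TYPES:
        raise ValueError(f"unsupported image type: {filename!r}")
    if len(data) > MAX_IMAGE_BYTES:
        raise ValueError("image is larger than the 20MB limit")
    # Inline the bytes as a base64 data URL instead of hosting the file.
    data_url = f"data:{IMAGE_MIME_TYPES[ext]};base64,{base64.b64encode(data).decode('ascii')}"
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {"type": "image_url", "image_url": {"url": data_url}},
        ],
    }


if __name__ == "__main__":
    # Placeholder bytes; a real call would read an actual screenshot from disk.
    msg = image_message("error_screenshot.png", b"placeholder", "What does this error mean?")
    print(msg["content"][1]["image_url"]["url"][:22])  # data:image/png;base64,
```

The returned dictionary goes into the `messages` list of a chat-completions request for a vision-capable model such as GPT-4o.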

LLaMA 3.2 Vision (90B) achieves competitive scores on visual benchmarks: according to Meta’s LLaMA 3.2 release blog, it scores 60.3% on MMMU (Massive Multi-discipline Multimodal Understanding), ahead of GPT-4o mini’s 59.4% but behind GPT-4o’s 69.1%. That makes LLaMA 3.2 Vision a strong open-source alternative for organizations requiring visual AI without API dependency, though it trails GPT-4o on this benchmark.

Who Uses LLaMA vs ChatGPT?

LLaMA adoption spans 4 primary user categories:

- Enterprise engineering teams building internal AI products

- AI researchers fine-tuning foundation models on domain-specific data

- Startups reducing infrastructure costs at high inference volumes

- Government and defense organizations with strict data sovereignty requirements

ChatGPT adoption spans 4 primary user categories:

- Individual knowledge workers requiring a polished, all-in-one AI assistant

- Small businesses using GPT-4o via ChatGPT Plus for writing and research

- Developers building GPT-powered apps through OpenAI’s API

- Educators and students using ChatGPT’s web interface for learning support

FAQ: LLaMA vs ChatGPT

Is LLaMA better than ChatGPT?

LLaMA 3.1 405B is roughly on par with GPT-4o on text benchmarks such as MMLU and HumanEval, but it lacks GPT-4o’s native multimodal input, web browsing, and managed API infrastructure.

Can LLaMA replace ChatGPT?

LLaMA replaces ChatGPT in text-only, privacy-sensitive, and cost-sensitive deployments, but not in workflows requiring image input, real-time web access, or a managed consumer interface.

Is LLaMA free to use?

LLaMA model weights are free to download under Meta’s Llama Community License. Deployment costs depend on hosting infrastructure, not licensing fees.

What is the context window of LLaMA 3.1?

LLaMA 3.1 supports a 128K-token (131,072-token) context window across all 3 model sizes (8B, 70B, 405B).

Final Decision: Choose LLaMA or ChatGPT

Choose LLaMA 3.1 if:

- Data privacy and on-premise deployment are required

- High-volume inference costs make API pricing prohibitive

- Fine-tuning on proprietary datasets is part of the workflow

- Text-only tasks are the primary use case

Choose ChatGPT (GPT-4o) if:

- Multimodal inputs (images, audio, documents) are part of daily tasks

- Real-time web access and up-to-date information are required

- Minimal infrastructure management is a priority

- A consumer-friendly interface is needed for non-technical users

Both LLaMA and ChatGPT represent capable, production-grade language model systems. The distinction is not one of quality alone; it is one of deployment model, cost structure, and organizational control. Organizations with technical teams and data compliance requirements benefit from LLaMA. Organizations prioritizing feature breadth and ease of use benefit from ChatGPT.

For more head-to-head comparisons, read Claude vs ChatGPT, Gemini vs ChatGPT, and explore the full list of ChatGPT alternatives.