ChatGPT vs Mistral AI: Performance, Pricing, and Use-Case Comparison (2026)

ChatGPT and Mistral AI differ across 5 core dimensions: performance, pricing, coding, privacy, and ecosystem depth. ChatGPT, developed by OpenAI, delivers a closed-source multimodal platform built on GPT-5 and GPT-4o models with 1,000+ plugin integrations. Mistral AI, a French AI company, develops open-weight large language models including Mistral Large 3, Mixtral 8x7B, and Codestral, optimized for speed, GDPR compliance, and cost-efficient API deployment.

| Dimension | ChatGPT (GPT-5 Series) | Mistral AI (Mistral 3 Series) |
| --- | --- | --- |
| Architecture | Proprietary closed-source, reasoning optimized | Open-weight, sparse Mixture-of-Experts |
| API Pricing (Input/1M tokens) | $0.63 (GPT-5) | $0.07 (Mistral Small 3.2) |
| HumanEval Coding Score | 92.4% | 92.0% (Mistral Large 3) |
| Time to First Token | 0.60 seconds | 0.30 seconds |
| Data Privacy | Cloud-based, EU residency for Enterprise | GDPR compliant, full self-hosting via Apache 2.0 |
| Ecosystem | 1,000+ plugins, Microsoft 365 native | Ollama, vLLM, NVIDIA NIM, Hugging Face |

What Is ChatGPT and What Is Mistral AI?

ChatGPT and Mistral AI represent the 2 dominant paradigms in the 2026 AI market: centralized proprietary intelligence and decentralized open-weight sovereignty. ChatGPT is OpenAI’s flagship conversational AI product. Mistral AI is a European open-weight LLM provider founded by former researchers from DeepMind and Meta.

What Is ChatGPT?

ChatGPT is OpenAI’s conversational AI product, powered by the proprietary frontier models GPT-5, GPT-4o, and GPT-5.4 Thinking. It is delivered through a closed-source multimodal platform that generates over $20 billion in annual recurring revenue.

GPT-5.4 features a 1.1 million token context window, processing entire codebases or multi-thousand-page documents in a single prompt. ChatGPT processes text, high-fidelity images, audio, and voice natively without external tools.

OpenAI controls all model weights under a closed-source license. Enterprise users access EU data residency and contractual data isolation, where inputs are never used for model training. ChatGPT integrates natively with productivity platforms including Microsoft 365, Salesforce, and Zapier.

What Is Mistral AI?

Mistral AI is a French AI company developing open-weight LLMs, including Mistral Large 3, Mixtral 8x7B, Codestral, and Ministral, licensed under Apache 2.0 for full local deployment on private infrastructure.

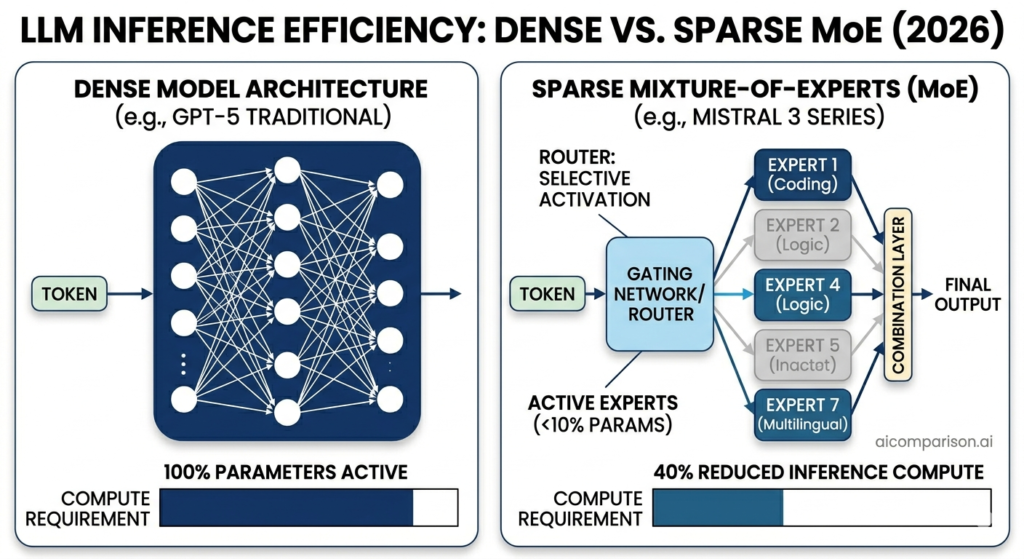

Mistral Large 3 activates 41 billion parameters per token from 675 billion total parameters using sparse Mixture-of-Experts architecture, reducing compute requirements by 40% while matching the knowledge density of much larger dense systems.
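To make the idea of sparse activation concrete, here is a minimal sketch of top-k expert routing in plain Python. The expert count, the value of `k`, and the gate scores are hypothetical illustration values, not Mistral Large 3’s actual configuration; only the 41B and 675B figures come from the text above.

```python
import math

def softmax(scores):
    """Convert raw gate scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k):
    """Pick the k highest-scoring experts and renormalise their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}

# Hypothetical gate scores for one token over 8 experts (illustrative only).
scores = [0.1, 2.3, -1.0, 0.7, 1.9, -0.4, 0.0, 0.5]
weights = route_top_k(scores, k=2)
print(sorted(weights))  # [1, 4]: only these two experts run for this token

# Share of parameters active per token, from the figures quoted above.
active_fraction = 41 / 675
print(f"{active_fraction:.1%}")  # 6.1%
```

Only the selected experts are evaluated for a given token, which is why the active parameter count can be a small fraction of the total.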

As a European entity operating under French jurisdiction, Mistral AI complies with GDPR across all subscription tiers by default and hosts all commercial services in European data centers. Le Chat, Mistral’s collaborative AI workspace, competes directly with the ChatGPT interface at $14.99 per month for the Pro tier, 25% cheaper than ChatGPT Plus.

ChatGPT vs Mistral AI: Core Feature Comparison

ChatGPT offers a managed cloud productivity environment with 1,000+ plugins, while Mistral AI delivers open-weight architecture with self-hosting, local deployment, and Mistral Forge for custom model training.

| Feature Dimension | ChatGPT (GPT-5 / GPT-5.4) | Mistral AI (Mistral 3 Family) |
| --- | --- | --- |
| Logic Processing | GPT-5 Thinking (Extended Chain-of-Thought) | Magistral (Transparent Reasoner, 40+ languages) |
| Customization | Custom GPTs, Fine-Tuning API | Mistral Forge (train from scratch on private data) |
| Multimodality | DALL-E 3, SearchGPT, Voice, Vision | Native image understanding across all variants |
| Deployment | Managed Cloud (OpenAI / Azure) | On-premise, edge, private VPC, and cloud |
| Agentic Tools | Agents SDK, Swarm integration | Native function calling, JSON mode, FIM operations |

What Features Does ChatGPT Offer?

ChatGPT delivers 5 advanced productivity features enabling professional workflows across content creation, software development, and business automation.

- Integrates DALL-E 3 for text-to-image generation and image editing, achieving a score of 1,512 on the Image Edit leaderboard.

- Executes Code Interpreter (Python Tools) with 100% accuracy on AIME 2025 mathematics, generating and running code in a sandboxed environment.

- Retains persistent memory across long-term interactions, storing user preferences, project details, and contextual history across every session.

- Supports 1,000+ plugin integrations, including Custom GPTs for HR automation, marketing workflows, and legal analysis, which have recorded a 19x increase in weekly usage.

- Processes multimodal inputs natively via GPT-5.4, handling text, images, audio, and real-time voice for live translation and voice-driven coordination without external tools.

For a detailed breakdown of GPT model variants, read the OpenAI Models Guide.

What Features Does Mistral AI Offer?

Mistral AI delivers 5 core features centered on architectural transparency, deployment flexibility, and enterprise data sovereignty.

- Provides open-weight architecture via Apache 2.0 licensing, allowing developers to inspect model weights, optimize inference settings, and deploy on any hardware without vendor lock-in.

- Delivers Le Chat, a web portal with native image understanding across every model variant from Ministral 3B to Mistral Large 3, available at $14.99 per month for the Pro tier.

- Achieves Mixture-of-Experts efficiency, routing queries to only the most relevant parameters and delivering 40% lower latency and 3x the throughput compared to dense models of equivalent quality.

- Supports local deployment via Ollama, vLLM, and NVIDIA NIM, ensuring data never leaves a company’s private network and satisfying the EU AI Act’s strictest requirements for high-risk systems.

- Offers Mistral Forge, launched in March 2026, enabling enterprises to train models from scratch on proprietary data, encoding institutional knowledge at the foundational model level.

ChatGPT vs Mistral AI: Benchmark Performance Comparison

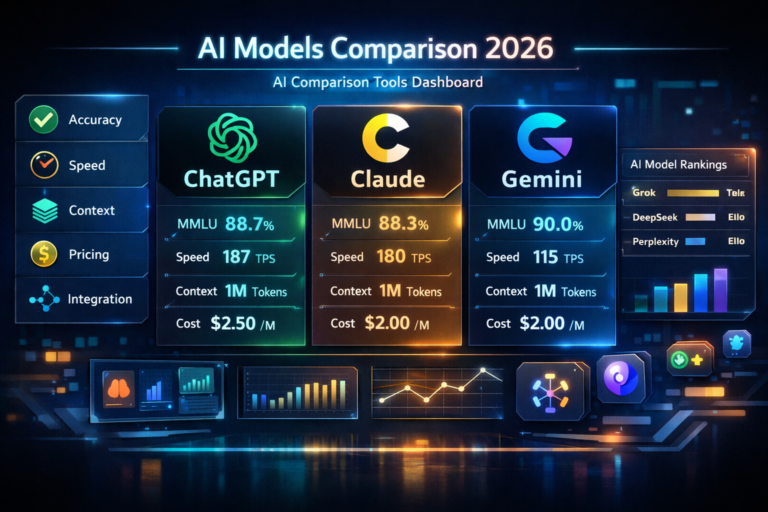

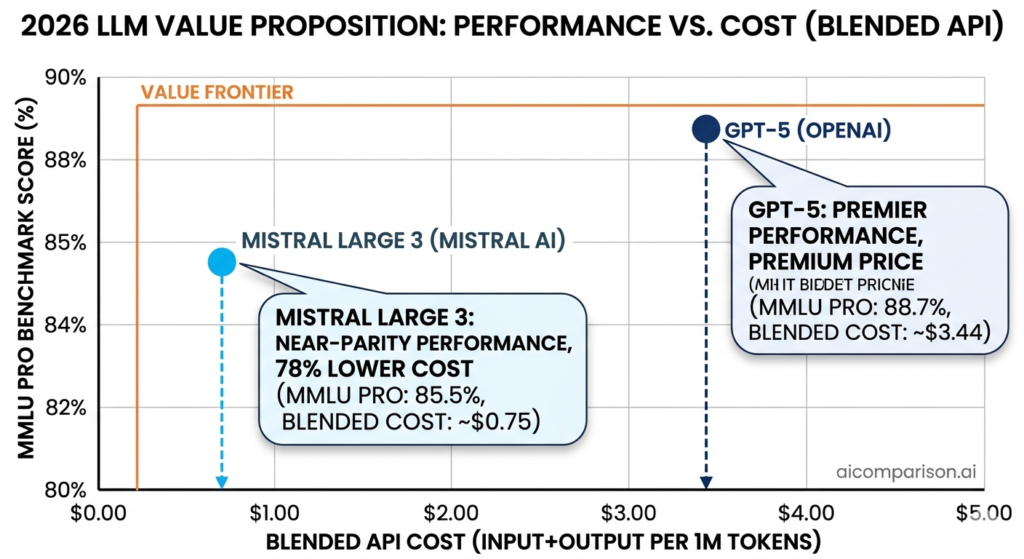

ChatGPT leads with 88.7% MMLU Pro and 89.4% GPQA Diamond. Mistral Large 3 reaches 85.5% MMLU Pro at 78% lower blended API cost and ranks #2 among non-reasoning open-source models on the LMArena leaderboard.

| Benchmark | GPT-5 / GPT-5.4 Pro | Mistral Large 3 |
| --- | --- | --- |
| MMLU Pro (Multi-task) | 88.7% | 85.5% |
| AIME 2025 (Math) | 100% (with Python tools) | 87.1% |
| GPQA Diamond (Reasoning) | 89.4% | 43.9% |
| HumanEval (Coding) | 92.4% | 92.0% |
| SWE-Bench (Agentic Coding) | 74.9% | 65.4% |

How Does Mistral 7B Perform Against GPT-4o on MMLU Benchmarks?

Mistral 7B scores 64.1% on MMLU, compared to GPT-4o’s 88.7%, a 24.6 percentage point gap on the Hugging Face Open LLM Leaderboard multi-task language understanding benchmark.

The more relevant 2026 comparison positions Mistral Large 3 at 85.5% against GPT-5 at 88.7%, a gap of only 3.2 percentage points. The MMLU benchmark evaluates performance across 57 academic subjects including mathematics, history, law, and medicine.

Mistral Large 3 ranks #2 globally among non-reasoning open-source models on LMArena leaderboard data, demonstrating near-parity in knowledge density at 78% lower blended API cost than GPT-5.

How Does Mixtral 8x7B Compare to GPT-4 on Reasoning Tasks?

Mixtral 8x7B scores 70.6% on reasoning benchmarks, compared to GPT-4’s 82%, an 11.4 percentage point gap reflecting the architectural trade-off between MoE efficiency and dense reasoning depth.

Mixtral 8x7B uses sparse activation, engaging only the most relevant expert parameters per query and reducing computation by 40% compared to dense models of equivalent size. GPT-4 and GPT-5 use chain-of-thought Thinking mode, boosting expert-level scores above 90% on GPQA benchmarks.

Mistral’s 2026 reasoning model, Magistral, closes this gap using GRPO (Group Relative Policy Optimization), maintaining high-fidelity reasoning across 40+ languages including French, German, Arabic, and Japanese. Mistral Large 3’s GPQA Diamond score of 43.9% compared to GPT-5’s 89.4% confirms OpenAI maintains a significant lead in PhD-level scientific reasoning tasks.

Which AI Model Has Lower Response Latency?

Mistral Large 3 delivers a Time to First Token of 300 ms, 2x faster than GPT-5.2’s 600 ms, making it the superior choice for real-time applications including live customer support, in-editor coding assistants, and interactive voice systems.

| Performance Metric | GPT-5.2 | Mistral Large 3 |
| --- | --- | --- |
| Time to First Token (TTFT) | 0.60 seconds | 0.30 seconds |
| Per-Token Latency | 0.020 seconds | 0.025 seconds |
| Throughput | 74–100 tokens/sec | 31.9–48 tokens/sec |
| Edge Performance | N/A (cloud only) | 85.0 t/s (Ministral 3B) |

GPT-5.2 generates long-form content faster once generation commences, producing each token in 20 ms versus Mistral Large 3’s 25 ms. Ministral 3B sets a 2026 edge performance record at 0.44 seconds TTFT on standard laptop hardware, according to Ollama’s published benchmarks.
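The trade-off between a faster first token and faster per-token generation can be worked through with simple arithmetic. The sketch below assumes a simplified model in which total response time is TTFT plus a constant per-token latency, using the table’s figures; real latency varies with load and prompt length, and the function names are illustrative.

```python
def response_time(ttft_s, per_token_s, n_tokens):
    """Total seconds to stream n_tokens: wait for the first token, then generate."""
    return ttft_s + per_token_s * n_tokens

def crossover_tokens(ttft_a, per_tok_a, ttft_b, per_tok_b):
    """Output length at which both models finish at the same time."""
    return (ttft_a - ttft_b) / (per_tok_b - per_tok_a)

GPT = (0.60, 0.020)      # (TTFT, per-token latency) from the table
MISTRAL = (0.30, 0.025)

n_equal = crossover_tokens(*GPT, *MISTRAL)
print(round(n_equal))  # 60

# Compare short, break-even, and long responses.
for n in (20, 60, 500):
    print(n, round(response_time(*GPT, n), 2), round(response_time(*MISTRAL, n), 2))
```

Under these assumptions, Mistral Large 3 finishes first for responses shorter than about 60 tokens, and GPT-5.2 finishes first for longer ones.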

ChatGPT vs Mistral AI for Coding

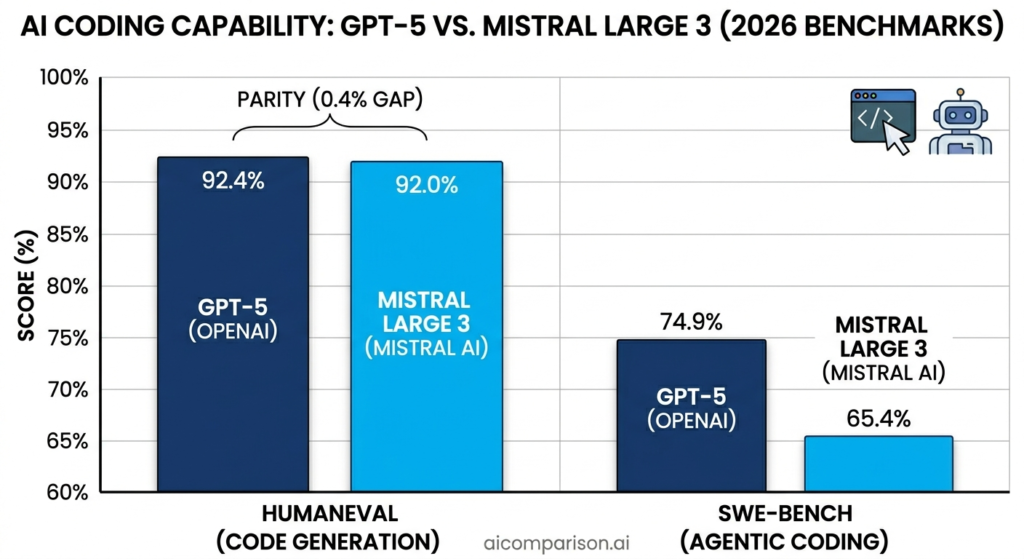

ChatGPT and Mistral AI deliver near-identical HumanEval scores (92.4% for GPT-5 and 92.0% for Mistral Large 3) but differ in deployment model, context window size, and specialized coding capabilities. For a comparison of AI coding environments, read Cursor vs Copilot.

How Does ChatGPT Perform on Code Generation Tasks?

ChatGPT achieves 92.4% on HumanEval and 74.9% on SWE-Bench, the highest agentic coding score among publicly evaluated models, using GPT-5-Codex and Code Interpreter for Python, JavaScript, and C++ generation.

Code Interpreter executes code in a sandboxed environment, enabling real-time debugging, data analysis, and automated test generation within a single interface. On SWE-Bench Verified, which measures autonomous resolution of real-world GitHub issues, GPT-5 achieves 74.9%, the leading score among all evaluated models. ChatGPT’s agentic workflow orchestrates multiple context windows simultaneously, making project-scale tasks involving spreadsheets, presentations, and multi-file codebases executable in a single session.

How Does Mistral AI Perform on Code Generation Tasks?

Mistral AI’s Codestral scores 81.1% on HumanEval, while Mistral Large 3 achieves 92.0%, near parity with GPT-5, with a 256K context window that enables entire repository ingestion in a single prompt.

Codestral and Devstral 2 are Mistral’s specialized coding models built for high-throughput development workflows. Codestral supports Fill-in-the-Middle (FIM) operations, understanding code context both before and after the cursor position, so it functions as an intelligent IDE auto-complete engine within editors including VS Code and JetBrains.
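A FIM request boils down to splitting the file at the cursor and sending both halves. The sketch below only builds the request body. The field names `prompt` and `suffix` and the model alias `codestral-latest` are assumptions about how a FIM completion endpoint is typically shaped, so check them against the provider’s API reference before use.

```python
import json

def split_at_cursor(source, cursor):
    """Split a source file into the text before and after the cursor offset."""
    return source[:cursor], source[cursor:]

def build_fim_payload(source, cursor, model="codestral-latest", max_tokens=64):
    """Build a FIM request body: the model fills the gap between prompt and suffix."""
    prefix, suffix = split_at_cursor(source, cursor)
    return {"model": model, "prompt": prefix, "suffix": suffix, "max_tokens": max_tokens}

# The cursor sits right after "return ", where the editor wants a completion.
code = "def area(radius):\n    return \n\nprint(area(2.0))\n"
cursor = code.index("return ") + len("return ")
payload = build_fim_payload(code, cursor)
print(json.dumps(payload, indent=2))
```

Because the suffix includes the later `print(area(2.0))` call, the model can see how the function is used and not just what precedes the cursor.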

Mistral’s training corpus covers 80+ programming languages natively, achieving high proficiency in less common languages including Rust, Kotlin, and regional scripting languages where GPT-5 shows lower accuracy. Ministral 14B achieves 85.0 on AIME 2025 locally, enabling complex algorithmic reasoning without cloud dependency. For a comparison of AI developer tools, read Claude Code vs Cursor.

Which AI Is Better for Developers: ChatGPT or Mistral?

ChatGPT delivers broader ecosystem integration for complex project management. Mistral AI provides lower API costs and self-hosting capability for proprietary source code protection.

Choose ChatGPT for development workflows requiring Microsoft 365 integration, Zapier automation, multimodal debugging, and high-level agentic orchestration across long software project timelines.

Choose Mistral AI for bulk code review automation, API-heavy applications requiring 20x lower per-token cost, multilingual development across 80+ programming languages, and environments where proprietary source code must never reach external API endpoints. Mistral’s FIM support makes it the superior choice for IDE-embedded auto-complete workflows.

ChatGPT vs Mistral AI: Pricing Comparison

Mistral AI maintains a cost advantage over ChatGPT across every pricing tier in 2026. Mistral Small 3.2 costs $0.07 per million input tokens, compared to GPT-5 at $0.63, a 9x difference on input cost alone. For a broader AI tool cost comparison, read Copilot vs ChatGPT.

| Model Tier | OpenAI Input / Output (per 1M) | Mistral Input / Output (per 1M) | Context Window |
| --- | --- | --- | --- |
| Flagship | $0.63 / $5.00 (GPT-5) | $0.50 / $1.50 (Large 3) | 262K–400K |
| Small / Edge | $0.20 / $0.80 (GPT-4.1 mini) | $0.07 / $0.20 (Small 3.2) | 32K–131K |
| Coding | Included in GPT-5 | $0.30 / $0.90 (Codestral) | 256K |
| Pro Expert | $30.00 / $180.00 (GPT-5.4 Pro) | N/A | 1.1M |
| Consumer Plan | $20/month (ChatGPT Plus) | $14.99/month (Le Chat Pro) | N/A |

What Is ChatGPT’s Pricing Structure?

ChatGPT offers 4 pricing tiers: Free (GPT-4o mini), Plus at $20/month, Team, and Enterprise. API costs range from $0.20 to $30.00 per million input tokens depending on model tier.

GPT-5 API costs $0.63 per million input tokens and $5.00 per million output tokens. GPT-5.4 Pro features a 1.1 million token context window at $30.00 input and $180.00 output per million tokens, the only available option for tasks exceeding 256K token context.

OpenAI offers prompt caching discounts of 50–90% on standard input costs for repetitive tasks including document auditing, prompt templating, and batch summarization. Reasoning models in the o-series consume 3x to 5x more tokens internally than appear in outputs, inflating API budgets for unmonitored deployments.
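The budget effect of hidden reasoning tokens can be estimated with a short calculation. This sketch assumes hidden reasoning tokens are billed at the output rate and equal a fixed multiple of the visible output. The monthly volume is hypothetical, and the $5.00 rate is borrowed from the GPT-5 figure above purely as a placeholder, since no o-series rate is quoted here.

```python
def billed_output_cost(visible_tokens, reasoning_multiplier, usd_per_million_output):
    """Estimate output spend when hidden reasoning tokens are billed too."""
    hidden_tokens = visible_tokens * reasoning_multiplier
    billed_tokens = visible_tokens + hidden_tokens
    return billed_tokens * usd_per_million_output / 1_000_000

visible = 2_000_000   # hypothetical monthly visible output tokens
rate = 5.00           # placeholder output rate in USD per 1M tokens

naive = billed_output_cost(visible, 0, rate)   # budget that ignores reasoning tokens
low = billed_output_cost(visible, 3, rate)     # 3x hidden reasoning tokens
high = billed_output_cost(visible, 5, rate)    # 5x hidden reasoning tokens
print(naive, low, high)  # 10.0 40.0 60.0
```

Logging the token usage reported with each API response, rather than counting visible output, is the simplest way to keep such an estimate honest.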

What Is Mistral AI’s Pricing Structure?

Mistral AI offers 3 pricing tiers: Free (Le Chat basic), Le Chat Pro at $14.99/month, and Team at $24.99 per user per month. API costs range from $0.07 to $0.50 per million input tokens.

Mistral Small 3.2 costs $0.07 per million input tokens and $0.20 per million output tokens, the most cost-efficient general-purpose LLM available in 2026. Mistral Large 3 costs $0.50 per million input tokens and $1.50 per million output tokens, making it 70% cheaper on output than GPT-5 at equivalent capability levels.

Codestral costs $0.30 input and $0.90 output per million tokens, optimized for high-volume developer workflows. Mistral Forge provides a separate enterprise pricing model for training custom models on proprietary data.

Which AI Is More Cost-Efficient: ChatGPT or Mistral?

Mistral AI is more cost-efficient than ChatGPT across every comparable API tier. Mistral Small 3.2 costs $0.07 per million input tokens, 89% lower than GPT-5 at $0.63, and Mistral Large 3 costs 70% less on output at equivalent performance.

| Model Comparison | Input / 1M | Output / 1M | Savings vs GPT-5 |
| --- | --- | --- | --- |
| GPT-5 | $0.63 | $5.00 | Baseline |
| Mistral Large 3 | $0.50 | $1.50 | 21% input / 70% output |
| Mistral Small 3.2 | $0.07 | $0.20 | 89% input / 96% output |
| Le Chat Pro vs ChatGPT Plus | $14.99/mo | N/A | 25% cheaper |

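At a blended 3:1 input-to-output token ratio, Mistral Large 3 costs $0.75 per million tokens. The 78% cost reduction cited for production deployments assumes a GPT-5 blended cost of $3.44; at the $0.63 / $5.00 rates listed above, the same 3:1 blend works out to about $1.72 for GPT-5, a 56% reduction.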
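A blended price is a weighted average of the input and output rates. Here is a minimal sketch of that arithmetic, assuming the per-million-token rates from the table above; the function names are illustrative and not taken from any pricing SDK.

```python
def blended_cost(input_usd, output_usd, input_parts=3, output_parts=1):
    """Weighted average price per 1M tokens for a given input:output mix."""
    total_parts = input_parts + output_parts
    return (input_usd * input_parts + output_usd * output_parts) / total_parts

def saving(baseline, candidate):
    """Fractional cost reduction of candidate relative to baseline."""
    return 1 - candidate / baseline

large3 = blended_cost(0.50, 1.50)   # Mistral Large 3 rates from the table
gpt5 = blended_cost(0.63, 5.00)     # GPT-5 rates from the table
print(round(large3, 2), round(gpt5, 2), f"{saving(gpt5, large3):.0%}")
```

Changing `input_parts` and `output_parts` adapts the estimate to workloads that are more output-heavy, where the gap in output pricing dominates.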

Startups deploying at enterprise scale achieve 80% lower annual AI spend using Mistral API versus OpenAI’s premium tier. ChatGPT’s GPT-5.4 Pro remains the only viable option for tasks requiring context windows exceeding 256K tokens.

ChatGPT vs Mistral AI: Privacy and Data Handling

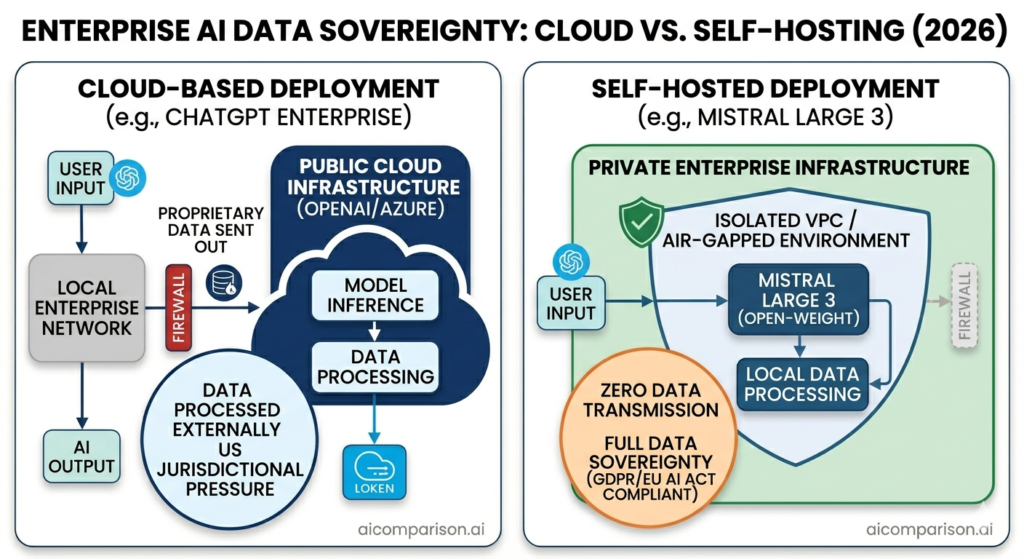

Privacy is the primary competitive differentiator in enterprise AI adoption in 2026, driven by the EU AI Act and increased corporate sensitivity around intellectual property. Mistral AI provides full data sovereignty through self-hosting. ChatGPT offers administrative isolation for Enterprise customers on US-based infrastructure.

How Does OpenAI Handle User Data in ChatGPT?

OpenAI stores ChatGPT Free and Plus user data on US-based servers with a 30-day retention policy, using it for model training unless a manual opt-out is enabled.

ChatGPT Enterprise and Team customers access EU Data Residency with contractual data isolation: inputs are never used for model training at these tiers. All ChatGPT users transmit data through OpenAI’s cloud servers regardless of subscription tier.

ChatGPT’s cloud architecture does not meet the EU AI Act’s high-risk system requirements for defense, healthcare, and government applications, as confirmed by EU AI Act Article 6 classifications.

How Does Mistral AI Handle User Data?

Mistral AI complies with GDPR across all subscription tiers by default, stores all commercial data in European data centers, and provides full self-hosting via Apache 2.0 licensed open-weight models.

Organizations download Mistral Large 3 weights under the Apache 2.0 license and deploy on internal servers using Ollama, vLLM, or NVIDIA NIM, achieving zero data transmission to any third-party endpoint. This self-hosting capability satisfies the EU AI Act’s requirements for high-risk system categories including healthcare, legal, and financial services. The Magistral reasoning model provides transparent, followable reasoning chains, enabling organizations to audit AI decision-making for regulatory compliance.
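For a sense of what self-hosted inference looks like in practice, the sketch below sends a prompt to an Ollama server on the same machine using only the standard library. It assumes Ollama is running on its default local port and that a model has already been pulled. The model tag `mistral` is a placeholder for whichever Mistral model is installed.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

def build_request(prompt, model="mistral"):
    """Build a non-streaming generate request for a locally running Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate(prompt, model="mistral"):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]

req = build_request("Summarise this contract clause in one sentence.")
print(req.full_url, req.get_method())
# Calling generate(...) requires the Ollama server to be running locally.
```

The request never leaves `localhost`, which is the property the data-sovereignty argument relies on.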

Which AI Is More Private: ChatGPT or Mistral?

Mistral AI provides stronger privacy than ChatGPT through GDPR compliance by default, European data center hosting, and full self-hosting across all subscription tiers, not exclusively enterprise plans.

ChatGPT offers strong administrative isolation for Enterprise users with EU Data Residency but remains a cloud-only US provider subject to US jurisdictional pressure. Mistral AI is the only path for organizations requiring EU AI Act compliance for high-risk systems, ensuring proprietary intellectual property never reaches external API endpoints. Organizations in regulated industries including healthcare, legal, defense, and financial services use Mistral’s self-hosted deployment as the standard-compliant option.

ChatGPT vs Mistral AI: Use-Case Comparison

ChatGPT and Mistral AI serve 4 distinct professional use cases with different performance profiles: content writing, business productivity, SEO workflows, and local deployment. For a broader AI assistant comparison, read Claude vs ChatGPT.

Which AI Is Better for Content Writing?

ChatGPT is better for content writing: GPT-5 ranks #1 on the LMArena Creative Writing leaderboard, demonstrating superior stylistic nuance, tone adaptation, and narrative flow for long-form marketing and editorial content.

In real-world SEO content tests, ChatGPT produces structured guides with clear headings and audience-adapted tone. Mistral AI produces more concise, factual explanations suited for technical documentation and data-driven content but lacking the stylistic range for creative brand storytelling. GPT-5 is the leading tool for authors, bloggers, and creative directors requiring a model that adapts to complex, specific writing styles across multiple brand voices.

Which AI Is Better for Business Use?

ChatGPT is better for general business productivity, connecting natively with Microsoft 365, Salesforce, and Zapier to automate multi-step workflows across organizations with 1,000+ plugin integrations. For a comparison of business AI tools, read Copilot vs ChatGPT.

The 4 most decisive business advantages of Mistral AI over ChatGPT are: 89% lower API input cost, full Apache 2.0 self-hosting, GDPR compliance by default, and Mistral Forge custom model training on proprietary data. Mistral Forge enables companies including ASML and Ericsson to train models on private institutional data, encoding deep organizational knowledge that general models cannot replicate. Businesses prioritizing cost-conscious scaling and data sovereignty choose Mistral AI as the long-term strategic investment.

Which AI Is Better for SEO Tasks?

ChatGPT is better for SEO strategy and content optimization, integrating natively with Surfer SEO to deliver live ranking benchmarks and NLP-powered keyword suggestions. Organizations using the ChatGPT and Surfer SEO workflow report a 140% traffic increase from reoptimizing existing pages to match AI search citation requirements. For an SEO tool comparison, read Surfer SEO vs Ahrefs.

Mistral AI is better for bulk technical SEO workflows, processing thousands of product descriptions, metadata tags, and technical audits at 20x lower API cost than OpenAI. Mistral’s training corpus covers 40+ languages including French, German, Arabic, Japanese, and Mandarin, delivering higher linguistic accuracy for international SEO campaigns. The 2 primary SEO use cases where Mistral outperforms ChatGPT are bulk metadata generation and multilingual content localization.

Which AI Is Better for Local Deployment?

Mistral AI is the only viable option for local deployment: OpenAI models operate exclusively on cloud infrastructure with zero offline or on-premise capability at any subscription tier.

| Deployment Tier | Recommended Mistral Model | Required Hardware |
| --- | --- | --- |
| Edge / Mobile | Ministral 3B (~3.0 GB) | MacBook Air M3 (24GB RAM) |
| Workstation | Mistral Small 3.2 (24B) | RTX 4090 (24GB VRAM) |
| Enterprise Server | Mistral Large 3 (675B) | 8x H200 or GB200 Cluster |
| Homelab / CPU | Ministral 3B | AMD Ryzen 9000 (32GB DDR5) |

Mistral Large 3 is optimized for speculative decoding and NVFP4 compression, enabling the 675B parameter model to run on a single 8x H100 node. Ministral 3B sets a 2026 edge performance record at 0.44 seconds TTFT on standard laptop hardware per Ollama’s published benchmarks, enabling near-instant offline AI interaction for field applications including drone navigation, medical diagnostics, and industrial robotics.
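A rough way to sanity-check these hardware pairings is to estimate the memory taken by the weights alone. The sketch below assumes a flat number of bits per weight and 80 GB of memory per H100. It ignores quantization scale factors, KV cache, and activations, so real requirements are higher.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

large3_fp4 = weight_memory_gb(675, 4)   # 4-bit weights, as with NVFP4
small_4bit = weight_memory_gb(24, 4)    # hypothetical 4-bit quantization of a 24B model
node_capacity = 8 * 80                  # 8x H100, assuming 80 GB per GPU

print(large3_fp4, small_4bit, node_capacity)  # 337.5 12.0 640
```

On this estimate the 4-bit weights of the 675B model occupy a little over half of an 8-GPU node, leaving the remainder for cache and runtime overhead.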

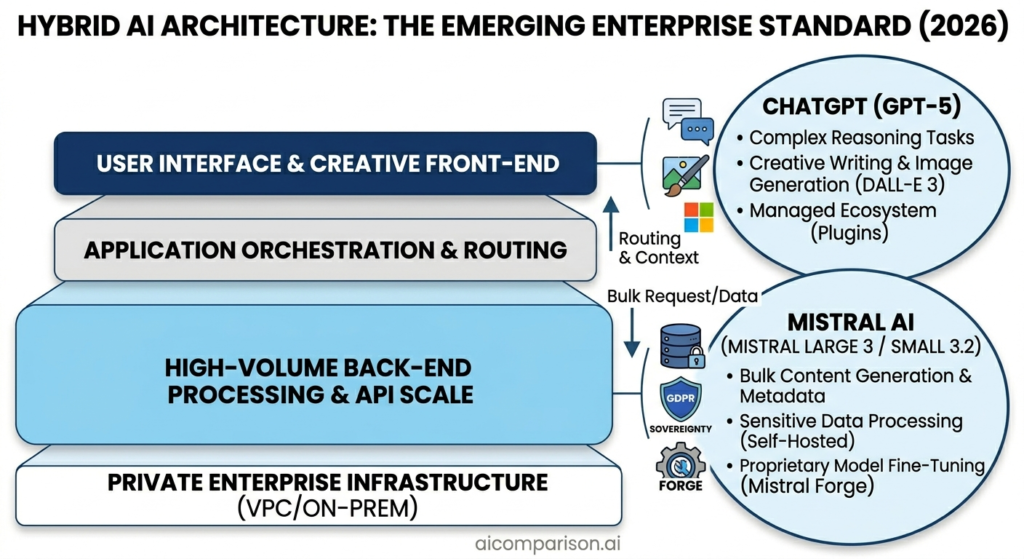

Can ChatGPT and Mistral AI Be Used Together?

ChatGPT and Mistral AI deliver maximum value in a hybrid architecture: ChatGPT handles creative, conversational, and multimodal front-end tasks while the Mistral API processes bulk, sensitive, and cost-intensive back-end workflows, reducing annual AI spend by 80%.

The 3 most effective hybrid deployment patterns in 2026 are:

- Creative and bulk split: routes drafting of strategies and marketing copy to ChatGPT, and processes bulk content generation, metadata, and technical audits through the Mistral Small 3.2 API at $0.07 per million input tokens.

- Sovereignty split: runs ChatGPT for general business productivity, and handles sensitive document processing, legal discovery indexing, and financial auditing on a locally hosted Codestral or Mistral Large 3 instance.

- Latency routing: deploys Mistral Large 3 for real-time customer-facing applications requiring 300 ms TTFT, and routes long-form document generation through GPT-5, where sustained throughput of 100 tokens per second matters more than initial speed.

Verinext’s 2026 Hybrid AI Architecture analysis identifies this pattern, with ChatGPT as the front-end creative interface and Mistral AI as the back-end processing engine, as the emerging standard enterprise deployment model.
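The three splits can be expressed as a small routing function. This is a sketch under stated assumptions: the `Task` fields, the backend labels, and the priority order (sovereignty first, then latency, then cost) are illustrative choices, not a prescribed architecture.

```python
from dataclasses import dataclass

@dataclass
class Task:
    kind: str                 # e.g. "creative", "bulk", "chat", "longform"
    sensitive: bool = False   # must the data stay on private infrastructure?
    realtime: bool = False    # is the user waiting on the first token?

def choose_backend(task):
    """Apply the three splits in priority order: sovereignty, latency, cost."""
    if task.sensitive:
        return "mistral-self-hosted"
    if task.realtime:
        return "mistral-api"
    if task.kind == "bulk":
        return "mistral-api"
    return "chatgpt"

print(choose_backend(Task("creative")))                      # chatgpt
print(choose_backend(Task("bulk")))                          # mistral-api
print(choose_backend(Task("chat", realtime=True)))           # mistral-api
print(choose_backend(Task("legal-review", sensitive=True)))  # mistral-self-hosted
```

Checking sensitivity first means a sensitive task is never sent to an external endpoint, even when it is also a bulk or real-time workload.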

ChatGPT vs Mistral AI: Final Verdict

ChatGPT ranks #1 on the LMArena Creative Writing leaderboard and integrates with 1,000+ plugins including Microsoft 365 and Salesforce, making it the leading choice for general users and content creators.

Mistral AI delivers 89% lower API input cost and full Apache 2.0 self-hosting, making it the leading choice for developers and privacy-focused organizations.

Choose ChatGPT If You:

- Require the highest reasoning benchmarks: GPT-5.4 Pro scores 89.4% on GPQA Diamond and 100% on AIME 2025 with Python tools, leading all available models in expert-level scientific and mathematical reasoning.

- Prioritize creative writing quality: GPT-5 ranks #1 on the LMArena Creative Writing leaderboard, producing superior stylistic nuance for marketing, editorial, and brand content.

- Need ecosystem integration: ChatGPT connects with 1,000+ plugins including Microsoft 365, Salesforce, DALL-E 3, and Zapier, functioning as a unified productivity layer.

- Value managed infrastructure: ChatGPT handles all infrastructure, model updates, and scaling automatically with zero technical configuration at the user level.

For users evaluating alternatives, read ChatGPT Alternatives.

Choose Mistral AI If You:

- Require data sovereignty: Mistral AI provides GDPR compliance by default, European data center hosting, and Apache 2.0 self-hosting, the only path to EU AI Act compliance for high-risk system categories.

- Deploy at API scale: Mistral Small 3.2 at $0.07 per million input tokens delivers 89% cost savings over GPT-5, enabling enterprise-scale AI at startup budgets.

- Need edge and offline performance: Ministral 3B runs at 85.0 tokens per second on edge hardware, enabling AI deployment on smartphones, drones, and industrial robots without cloud connectivity.

- Build custom institutional intelligence: Mistral Forge enables organizations to train models from scratch on proprietary data, encoding competitive institutional knowledge at the foundational model level.

For users evaluating open-weight AI alternatives, read Claude Alternatives.