Claude vs ChatGPT vs Gemini (2026): Which AI Model Performs Best?

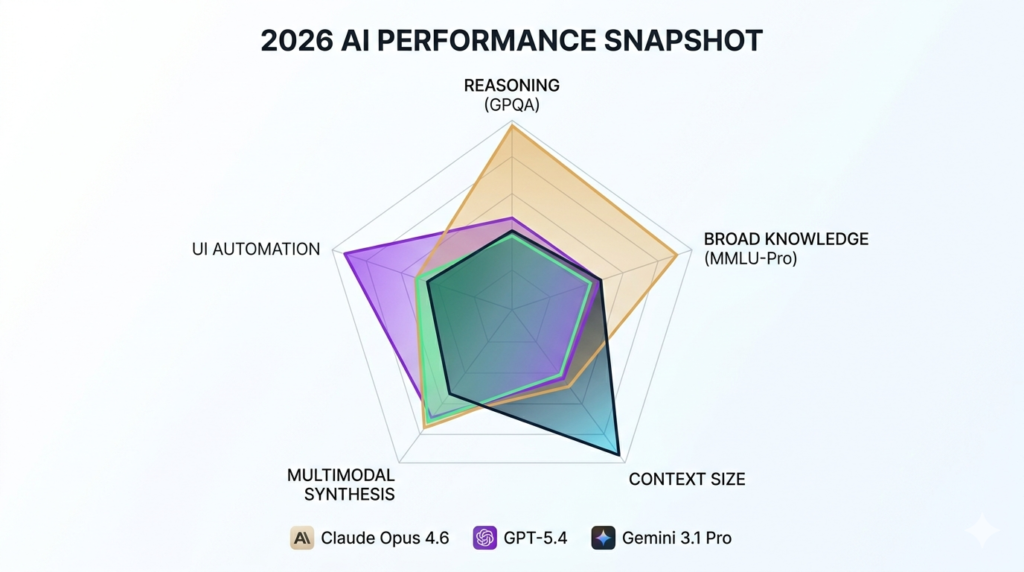

Anthropic’s Claude Opus 4.6, OpenAI’s GPT-5.4, and Google’s Gemini 3.1 Pro are the 3 dominant AI models in 2026. Claude leads in reasoning precision and coding accuracy. ChatGPT leads in versatile task execution and UI automation. Gemini leads in multimodal research and context window capacity.

These 3 models differ across 8 core performance dimensions: reasoning accuracy, coding ability, writing quality, hallucination rate, context window size, multimodal capability, AI safety approach, and subscription pricing.

What Are the Differences Between Claude, ChatGPT, and Gemini?

Claude, ChatGPT, and Gemini differ in 3 core architectural philosophies: reasoning depth, execution speed, and data retrieval breadth.

Claude Opus 4.6 applies Reason-Based Alignment, a Constitutional AI framework that prioritizes ethical self-critique. GPT-5.4 functions as a generalist operating layer with native computer use, scoring 75% on the OSWorld benchmark. Gemini 3.1 Pro processes text, image, audio, and video natively with a 2-million-token context window.

| Model | Developer | Core Strength | Best For | Pro Pricing |
| --- | --- | --- | --- | --- |
| Claude Opus 4.6 | Anthropic | Reasoning + Coding | Developers, Legal, Finance | $20/month |
| GPT-5.4 | OpenAI | Versatility + Computer Use | Writers, General Users | $20/month |
| Gemini 3.1 Pro | Google DeepMind | Research + Multimodal | Analysts, Researchers | $19.99/month |

The 3 primary differences between Claude, ChatGPT, and Gemini are:

- Reasoning approach: Claude applies Constitutional AI self-critique, ChatGPT uses Reinforcement Learning from Human Feedback (RLHF), and Gemini applies Google’s Responsible AI Principles

- Execution capability: ChatGPT leads in UI automation at 75% OSWorld, Claude leads in code architecture at 80.8% SWE-bench Verified, and Gemini leads in multimodal data synthesis at 78.2% Video-MME

- Context capacity: Gemini supports 2 million tokens, Claude supports 1 million tokens, and GPT-5.4 supports 128,000–512,000 tokens

For a detailed two-model breakdown, read Claude vs ChatGPT and Claude vs Gemini.

How Does Claude Compare to ChatGPT and Gemini in 2026?

Claude Opus 4.6 leads GPQA Diamond scientific reasoning at 87.4%–94.1%. GPT-5.4 leads MMLU-Pro broad knowledge at 92.3%. Gemini 3.1 Pro leads ARC-AGI-2 abstract reasoning at 77.1%.

| Benchmark | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
| --- | --- | --- | --- |
| GPQA Diamond | 87.4%–94.1% | 83.9%–92.8% | 82.1%–94.3% |
| MMLU-Pro | 91.7% | 92.3% | 90.8% |
| HumanEval+ | 94.6% | 95.1% | 92.3% |
| ARC-AGI-2 | 68.8% | 73.3% | 77.1% |
| SWE-bench Verified | 80.8% | 80.1% | 68.3% |

For a full benchmark breakdown across model generations, see Gemini 2.5 Pro vs Claude 3.7 Sonnet.

Who Develops Claude, ChatGPT, and Gemini?

Claude, ChatGPT, and Gemini are developed by 3 organizations with distinct AI safety philosophies.

| Organization | Founded | HQ | AI Safety Approach |
| --- | --- | --- | --- |
| Anthropic | 2021 | San Francisco, CA | Constitutional AI (RLAIF) |
| OpenAI | 2015 | San Francisco, CA | Reinforcement Learning from Human Feedback (RLHF) |
| Google DeepMind | 2010 (merged with Google Brain in 2023) | London, UK | Responsible AI Principles + Facts Grounding |

Anthropic operates as a Public Benefit Corporation founded by former OpenAI researchers. OpenAI operates a for-profit public benefit corporation controlled by the nonprofit OpenAI Foundation and reports over 900 million weekly active users. Google DeepMind leverages custom TPU hardware and some of the world’s largest proprietary datasets.

Claude vs ChatGPT vs Gemini for Writing: Which AI Produces Better Content?

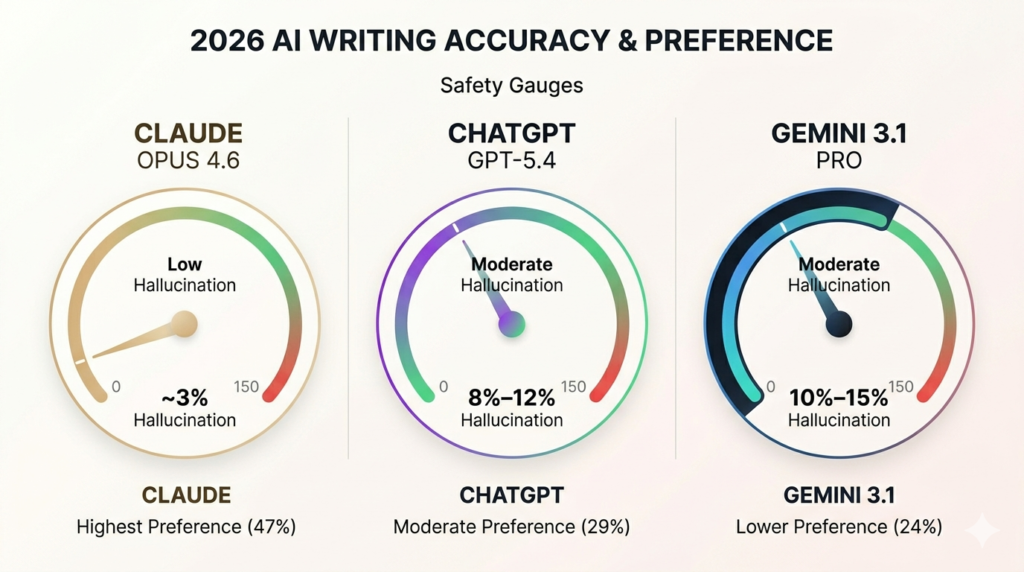

Claude Opus 4.6 produces the most accurate long-form content, ChatGPT GPT-5.4 generates the most precise marketing copy, and Gemini 3.1 Pro delivers the most research-grounded content.

In blind human evaluations of writing quality, Claude received 47% of preferences, ChatGPT 29%, and Gemini 24%, according to independent 2026 content evaluation studies.

| Model | Best Writing Use Case | Tone Accuracy | Hallucination Rate |
| --- | --- | --- | --- |
| Claude Opus 4.6 | Long-form, SEO, Legal | High (maintains subtext) | ~3% |
| GPT-5.4 | Marketing copy, Outlines | Moderate (needs prompting) | 8%–12% |
| Gemini 3.1 Pro | Research reports, Summaries | Low (skews academic) | 10%–15% |

For AI writing tool comparisons beyond the Big 3, read Jasper AI vs ChatGPT or Jasper vs Copy AI.

Which AI Model Generates the Most Accurate Long-Form Content?

Claude Opus 4.6 generates the most accurate long-form content with a 1-million-token context window and ~3% hallucination rate across documents exceeding 5,000 words.

The 3 long-form content differences between Claude, ChatGPT, and Gemini are:

- Synthesis fidelity: Claude draws precise inter-document connections across 120,000-token multi-source inputs, GPT-5.4 misses nuanced cross-document relationships at extended lengths, and Gemini retrieves raw volume with an 84.9% grounding score

- Transcript summarization: Claude preserves tone and logical nuance, ChatGPT extracts action items efficiently, and Gemini processes the highest volume with native audio transcription

- API cost for long-form: Gemini costs $2.00 per 1 million input tokens, GPT-5.4 costs $12.00, and Claude Opus 4.6 costs $15.00

Is Claude or ChatGPT Better for Blog Writing and SEO Content?

Claude Opus 4.6 produces more tonally consistent blog content. ChatGPT GPT-5.4 produces more precise SEO-structured metadata and schema markup.

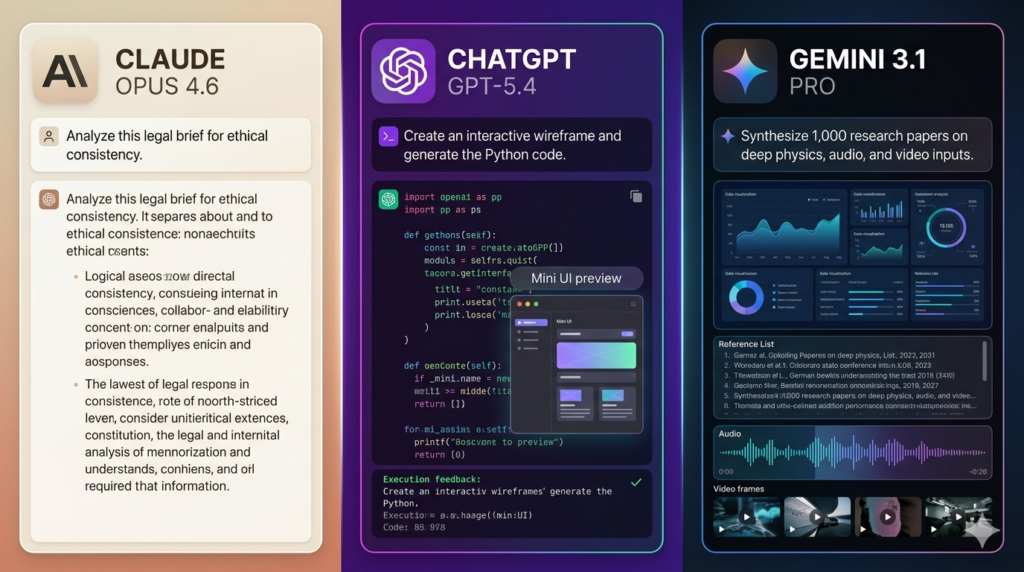

Real prompt test (“Write an SEO blog introduction on AI tools 2026”):

- Claude delivered structured H2/H3 outlines with natural sentence rhythm and zero corporate filler language

- ChatGPT delivered precise metadata, schema suggestions, and keyword-density guidance with rule-based accuracy

- Gemini delivered research-grounded content with real-time citations but academic tone requiring additional prompting

Claude scores 83.3% on the IFEval instruction-following benchmark. The most effective 2026 SEO workflow uses Gemini for research, ChatGPT for metadata and structure, and Claude for the natural-language draft.

Claude vs ChatGPT vs Gemini for Coding: Which AI Writes Better Code?

Claude Opus 4.6 leads in code architecture at 80.8% SWE-bench Verified, ChatGPT GPT-5.4 leads in terminal execution at 75.1% Terminal-Bench 2.0, and Gemini 3.1 Pro leads in large codebase context at 1–2 million tokens.

The 5 coding use cases where Claude, ChatGPT, and Gemini differ most are:

- Code generation: Claude scores 94.6% on HumanEval+ for clean one-shot generation, GPT-5.4 scores 95.1% with support for 200+ programming languages, and Gemini scores 92.3% with strong Python output

- Debugging: Claude identifies subtle bugs through Thinking Mode step-by-step reasoning, GPT-5.4 recovers fastest from ambiguous instructions, and Gemini leverages Google Cloud integration for environment-specific debugging

- Refactoring: Claude produces the most idiomatic, type-safe code with proper generics and JSDoc comments, GPT-5.4 executes large-scale edits through Computer Use, and Gemini handles the largest codebase context

- Terminal execution: GPT-5.4 leads with 75.1% on Terminal-Bench 2.0 for CI/CD debugging and Git operations, Claude scores 65.4%, and Gemini scores 68.5%

- Agentic coding: Claude Code navigates codebases and manages PRs autonomously from the terminal, GPT-5.4 automates UI-based tasks at 75% OSWorld, and Gemini Code Assist provides native GCP co-pilot integration

| Coding Capability | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
| --- | --- | --- | --- |
| SWE-bench Verified | 80.8% | 80.1% | 68.3% |
| HumanEval+ | 94.6% | 95.1% | 92.3% |
| Terminal-Bench 2.0 | 65.4% | 75.1% | 68.5% |
| Codebase Context | 1M tokens | 128k–512k tokens | 1–2M tokens |

For a dedicated agentic coding comparison, read Claude Code vs Cursor or Cursor vs Copilot.

Which AI Model Debugs and Refactors Code Most Effectively?

Claude Opus 4.6 debugs complex architectural tasks most effectively. GPT-5.4 refactors most effectively for terminal-heavy execution tasks.

Real debugging test (buggy Python loop):

- Claude refactored the code error-free in one pass with full docstrings and strict type hints

- GPT-5.4 iterated twice but recovered fastest with clear error explanations

- Gemini identified the surface bug but produced less strict output without proper generics

| Refactoring Feature | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
| --- | --- | --- | --- |
| Subtle Bug Detection | Best (Thinking Mode) | Good | Moderate |
| Code Cleanliness | Highest (idiomatic) | High | Functional |
| Explanation Quality | Best (step-by-step) | Excellent | Good |
| Recovery Speed | Moderate | Fastest | Moderate |

Is Claude Better Than ChatGPT for Technical and Complex Coding Tasks?

Claude Opus 4.6 is better than GPT-5.4 for technical coding tasks requiring multi-step architectural reasoning. GPT-5.4 is better for computer use and UI automation tasks.

For a full OpenAI model generation comparison, see GPT-4o vs GPT-4.1.

| Technical Task | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
| --- | --- | --- | --- |
| Algorithmic Logic | Leading accuracy | Strong speed | Moderate |
| Multi-file Synthesis | Best architecture | Best execution | Best context |
| API Integration | High adherence | Widest support | Best GCP/Firebase |
| Computer Use | Moderate | 75% OSWorld | Moderate |

The most effective 2026 developer routing policy applies Claude for initial architectural planning and GPT-5.4 for rapid prototyping and terminal-heavy execution.

Claude vs ChatGPT vs Gemini for Reasoning: Which AI Model Is Most Accurate?

Claude Opus 4.6 leads in scientific reasoning and hallucination control. GPT-5.4 leads in broad knowledge accuracy. Gemini 3.1 Pro leads in abstract reasoning and facts grounding.

| Reasoning Benchmark | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
| --- | --- | --- | --- |
| GPQA Diamond | 87.4%–94.1% | 83.9%–92.8% | 82.1% |
| MMLU-Pro | 91.7% | 92.3% | 90.8% |
| ARC-AGI-2 | 68.8% | 73.3% | 77.1% |
| Math-500 | 97.1% | 96.8% | 95.9% |
| Hallucination Rate | ~3% | 8%–12% | 10%–15% |

Which AI Model Has the Lowest Hallucination Rate?

Claude Opus 4.6 has the lowest hallucination rate at ~3%, supported by Constitutional AI, a training method in which the model learns to critique and revise its outputs against a written set of principles.

The 3 hallucination rate differences between Claude, ChatGPT, and Gemini are:

- Factual consistency: Claude scores 98.2% factual consistency per LMSYS Chatbot Arena independent evaluation, GPT-5.4 scores 96.9%, and Gemini scores 89.6%

- TruthfulQA performance: Claude scores 78.9%, GPT-5.4 scores 77.2%, and Gemini scores 76.8%

- Confidence calibration: Claude pushes back on flawed premises more consistently than GPT-5.4, which optimizes for engagement over truth in borderline cases, per LMSYS Arena human evaluation data

Claude is the preferred model for healthcare, finance, and legal sectors where a single hallucination carries reputational and legal risk.
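To see why a few percentage points matter in regulated work, the sketch below treats each output as an independent trial that hallucinates at the rates quoted in this article. Independence is a simplifying assumption (real errors tend to cluster), and the function names and the 100-output batch are illustrative, not part of any vendor SDK.

```python
# Minimal sketch: each output is treated as an independent trial that
# hallucinates with probability p (a simplifying assumption).

def expected_hallucinations(p: float, n: int) -> float:
    """Expected number of hallucinated outputs among n outputs."""
    return p * n


def prob_at_least_one(p: float, n: int) -> float:
    """Probability that at least one of n independent outputs hallucinates."""
    return 1.0 - (1.0 - p) ** n


# Rates quoted in this article; ranges are split into low and high ends.
RATES = {
    "Claude Opus 4.6": 0.03,
    "GPT-5.4 (low end)": 0.08,
    "GPT-5.4 (high end)": 0.12,
    "Gemini 3.1 Pro (low end)": 0.10,
    "Gemini 3.1 Pro (high end)": 0.15,
}

if __name__ == "__main__":
    n = 100  # hypothetical batch, e.g. 100 reviewed contract clauses
    for model, p in RATES.items():
        print(
            f"{model}: {expected_hallucinations(p, n):.1f} expected, "
            f"P(at least one) = {prob_at_least_one(p, n):.3f}"
        )
```

Under these assumptions, even a ~3% rate gives roughly a 95% chance of at least one hallucinated output across 100 outputs.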

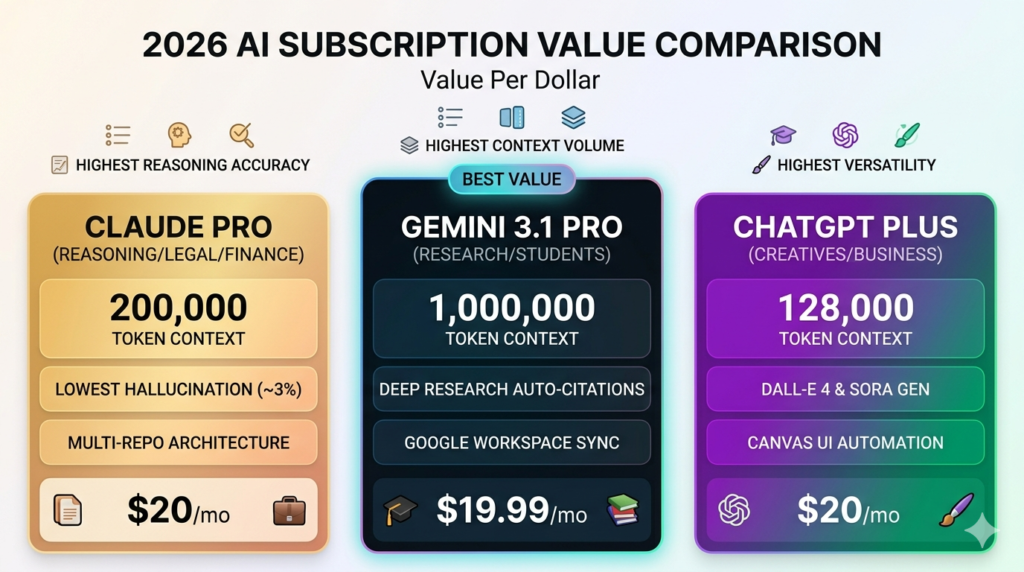

Claude vs ChatGPT vs Gemini Pricing: Which AI Subscription Offers the Best Value?

Google AI Pro at $19.99/month offers the best value for researchers and students. Claude Pro and ChatGPT Plus both cost $20/month with distinct feature sets.

| Subscription Plan | Cost/Month | Key Feature | Context Limit |
| --- | --- | --- | --- |
| ChatGPT Plus | $20.00 | GPT-5.4 + DALL-E 4 + Sora | 128,000 tokens |
| Claude Pro | $20.00 | Reasoning + Claude Cowork | 200,000 tokens |
| Google AI Pro | $19.99 | Deep Research + Workspace | 1,000,000 tokens |
| ChatGPT Pro | $200.00 | Priority access + high limits | 272,000–1M tokens |
| Claude Max 20x | $200.00 | 20x usage for developers | 1,000,000 tokens |
| Google AI Ultra | $249.99 | Veo 3 + 30TB storage | 2,000,000 tokens |

The 3 deciding factors between Claude Pro and ChatGPT Plus at $20/month are:

- Multimodal output: ChatGPT Plus generates images via DALL-E 4 and videos via Sora; Claude Pro analyzes images but does not generate them

- Reasoning accuracy: Claude Pro produces hallucinations in ~3% of outputs versus 8%–12% for ChatGPT Plus

- Context window: Claude Pro provides 200,000 tokens versus 128,000 tokens for ChatGPT Plus at the standard tier

API pricing for developers:

| Model | Input Cost per 1M Tokens | Output Cost per 1M Tokens |
| --- | --- | --- |
| Gemini 3.1 Pro | $2.00 | $12.00 |
| GPT-5.4 | $12.00 | $60.00 |
| Claude Opus 4.6 | $15.00 | $75.00 |
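A quick way to compare these list prices on a real workload is to price input and output tokens separately. The sketch below uses the per-1M-token figures from the table above; the 500,000-input / 50,000-output workload is a hypothetical example, and actual bills may differ (for example, with prompt caching or batch discounts).

```python
# Per-1M-token list prices in USD, taken from the table above:
# model -> (input price, output price).
PRICES = {
    "Gemini 3.1 Pro": (2.00, 12.00),
    "GPT-5.4": (12.00, 60.00),
    "Claude Opus 4.6": (15.00, 75.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of a workload, pricing input and output tokens separately."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 500,000 input tokens, 50,000 output tokens.
    for name in PRICES:
        print(f"{name}: ${request_cost(name, 500_000, 50_000):.2f}")

    # Input-price ratio behind the "7.5x cheaper" comparison.
    ratio = PRICES["Claude Opus 4.6"][0] / PRICES["Gemini 3.1 Pro"][0]
    print(f"Claude/Gemini input-price ratio: {ratio:.1f}x")
```

For that hypothetical workload the sketch gives $1.60 for Gemini 3.1 Pro, $9.00 for GPT-5.4, and $11.25 for Claude Opus 4.6; on input tokens alone, Claude's $15.00 is 7.5x Gemini's $2.00.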

If the $20/month plans do not match your needs, explore ChatGPT Alternatives, Claude Alternatives, or Gemini Alternatives.

Claude vs ChatGPT vs Gemini Context Window: Which AI Handles Longer Documents?

Gemini 3.1 Pro handles the longest documents with a 2-million-token context window. Claude Opus 4.6 handles documents with the highest synthesis accuracy within 1 million tokens. GPT-5.4 handles documents within 128,000–512,000 tokens.

The 3 document handling differences are:

- Raw capacity: Gemini supports entire legal libraries, full GitHub repositories, and hour-long video transcripts in one prompt; Claude supports documents of up to ~750,000 words with the highest accuracy; and GPT-5.4 supports documents of ~96,000–384,000 words

- Synthesis fidelity: Claude leads in attribution precision and inter-document connection accuracy, Gemini leads in raw volume retrieval, and GPT-5.4 shows instruction drift in very long conversations

- Cost efficiency: Gemini costs $2.00 per 1 million input tokens versus $15.00 for Claude, making Gemini the pragmatic choice for bulk document processing
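The word counts above follow a rough rule of thumb of about 0.75 English words per token (1,000,000 tokens ≈ 750,000 words). The sketch below applies that ratio to estimate whether a document fits each context window; the ratio, the 8,000-token reserve for the model's reply, and the model labels are assumptions for illustration, since real tokenizers vary by model and language.

```python
import math

# Rough rule of thumb implied by this article's figures (assumption):
# about 0.75 English words per token. Real tokenizers vary.
WORDS_PER_TOKEN = 0.75

# Context limits quoted in this article, in tokens (labels are illustrative).
CONTEXT_TOKENS = {
    "Gemini 3.1 Pro": 2_000_000,
    "Claude Opus 4.6": 1_000_000,
    "GPT-5.4 (high end)": 512_000,
    "GPT-5.4 (low end)": 128_000,
}


def tokens_to_words(tokens: int) -> int:
    """Approximate word capacity of a token budget."""
    return round(tokens * WORDS_PER_TOKEN)


def estimate_tokens(word_count: int) -> int:
    """Approximate tokens needed for a document, rounded up."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def models_that_fit(word_count: int, reserve_tokens: int = 8_000) -> list[str]:
    """Models whose context holds the document plus a hypothetical reply reserve."""
    needed = estimate_tokens(word_count) + reserve_tokens
    return [name for name, limit in CONTEXT_TOKENS.items() if needed <= limit]


if __name__ == "__main__":
    for name, limit in CONTEXT_TOKENS.items():
        print(f"{name}: ~{tokens_to_words(limit):,} words")
    print(models_that_fit(600_000))
```

For example, a 600,000-word corpus estimates to about 800,000 tokens, which fits Gemini 3.1 Pro and Claude Opus 4.6 under these assumptions but neither end of the GPT-5.4 range.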

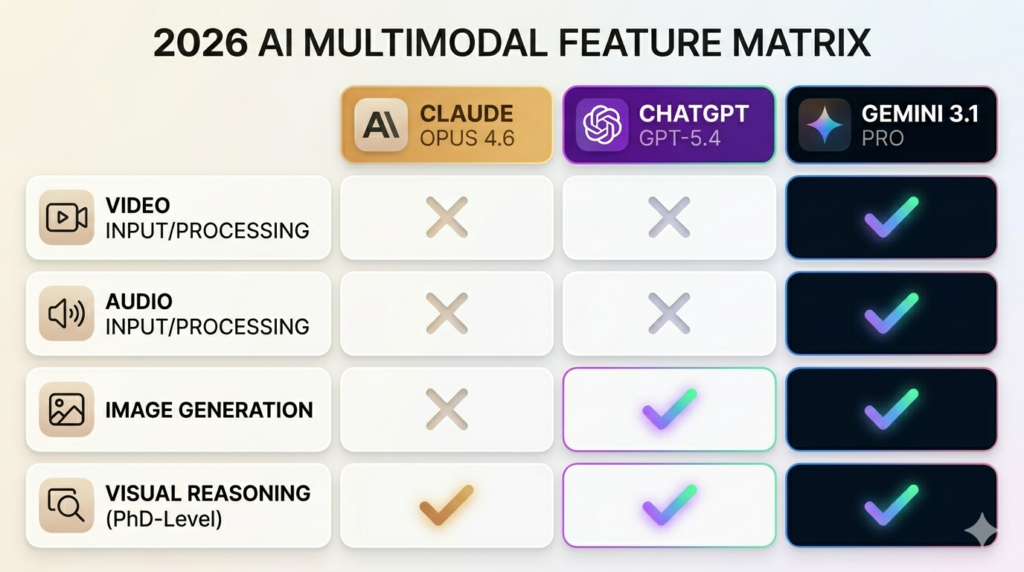

Claude vs ChatGPT vs Gemini for Multimodal Tasks: Which AI Processes Images and Data Better?

Gemini 3.1 Pro leads multimodal processing with native video, audio, and image understanding at 78.2% Video-MME. ChatGPT GPT-5.4 leads multimodal output generation with DALL-E 4 and Sora. Claude Opus 4.6 leads image analysis accuracy at 68.8% ARC-AGI-2 visual reasoning but does not generate images or process video natively.

| Multimodal Feature | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
| --- | --- | --- | --- |
| Image Analysis | Yes (PhD-level precision) | Yes | Yes |
| Image Generation | No | Yes (DALL-E 4) | Yes (Imagen 3) |
| Video Processing | No | No (converter needed) | Yes (native) |
| Audio Processing | No (transcription required) | No (transcription required) | Yes (native) |
| Visual Reasoning | 68.8% ARC-AGI-2 | 52.9% | 31.0% |
| Video Understanding | N/A | N/A | 78.2% Video-MME |

For a full multimodal comparison between the top 2 models, read Gemini vs ChatGPT.

Claude vs ChatGPT vs Gemini for AI Safety: Which Model Follows Ethical Guidelines Most Strictly?

Anthropic’s Claude follows the strictest ethical guidelines through Constitutional AI and a 4-tier priority hierarchy that places broad safety above helpfulness. Per LMSYS Chatbot Arena evaluation data, Claude refuses approximately 20% more high-risk prompts than ChatGPT and Gemini.

| Safety Strategy | Anthropic Claude | OpenAI ChatGPT | Google Gemini |
| --- | --- | --- | --- |
| Methodology | Constitutional AI (RLAIF) | RLHF + Model Spec | Responsible AI Principles |
| Bias Mitigation | Self-critique + constitutions | Human feedback + audit | Data diversification |
| Rejection Rate | Highest (safety-first) | Moderate (balanced) | Moderate |
| Transparency | Documented constitution | Opaque RLHF weights | Research reporting |

Anthropic’s Constitutional AI 4-tier hierarchy ranks: broadly safe first, broadly ethical second, compliant with guidelines third, and genuinely helpful fourth. This makes Claude the preferred model for high-accountability teams in legal, finance, and medical sectors.

Claude vs ChatGPT vs Gemini: Which AI Is Best for Developers, Writers, and Researchers?

Claude Opus 4.6 is best for developers, ChatGPT GPT-5.4 is best for content writers and marketers, and Gemini 3.1 Pro is best for researchers and data analysts.

The 4 professional personas and their optimal AI model assignments in 2026 are:

- Developer → Claude Opus 4.6: architectural refactoring, complex reasoning, autonomous PR management via the Claude Code terminal tool

- Content writer → GPT-5.4: marketing copy, creative brainstorming, iterative editing via the Canvas interface

- Researcher → Gemini 3.1 Pro: web-scale synthesis, multimodal data analysis, automated citations via Deep Research

- IT administrator → GPT-5.4: terminal execution at 75.1% Terminal-Bench 2.0, computer use, CI/CD automation

| Professional Persona | Recommended Model | Primary Value | Key Feature |
| --- | --- | --- | --- |
| Developer | Claude Opus 4.6 | Code architecture + reasoning | Claude Code terminal |
| Content Writer | GPT-5.4 | Marketing copy + creativity | Canvas interface |
| Researcher | Gemini 3.1 Pro | Web synthesis + multimodal | Deep Research |
| IT Administrator | GPT-5.4 | Terminal + computer use | 75.1% Terminal-Bench |

What Reddit communities report about Claude vs ChatGPT vs Gemini in 2026

Reddit communities on r/ChatGPT, r/ClaudeAI, and r/Gemini consistently report 3 real-world usage patterns:

- Developers switch to Claude after experiencing GPT-5.4 producing plausible but architecturally flawed multi-file code in complex projects

- Writers retain ChatGPT Plus for Canvas-based iterative editing despite Claude producing more natural prose

- Students and researchers migrate to Google AI Pro for Deep Research after finding Perplexity Pro redundant at the same price point

Claude vs ChatGPT vs Gemini vs Other AI Models: How Do Grok, DeepSeek, Copilot, and Perplexity Compare?

Grok 4 leads in real-time trending data, DeepSeek V3.2 leads in open-source cost efficiency, Microsoft Copilot leads in Microsoft 365 workplace automation, and Perplexity Pro leads in sourced fact-checking at 99.95% answer success rate.

| Specialist Model | Developer | 2026 Differentiator | Best For |
| --- | --- | --- | --- |
| Grok 4 | xAI | Real-time X data + 40% faster speed | Coding + trending topics |
| DeepSeek V3.2 | DeepSeek AI | Open weights + lowest cost | Budget developers |
| Microsoft Copilot | Microsoft | Native Microsoft 365 + Azure integration; Teams summaries in under 30 seconds | Workplace automation |
| Perplexity Pro | Perplexity AI | 99.95% sourced accuracy | Research + journalism |

Read the full dedicated comparisons: Grok vs Claude · DeepSeek vs ChatGPT · Copilot vs ChatGPT · Perplexity vs ChatGPT.

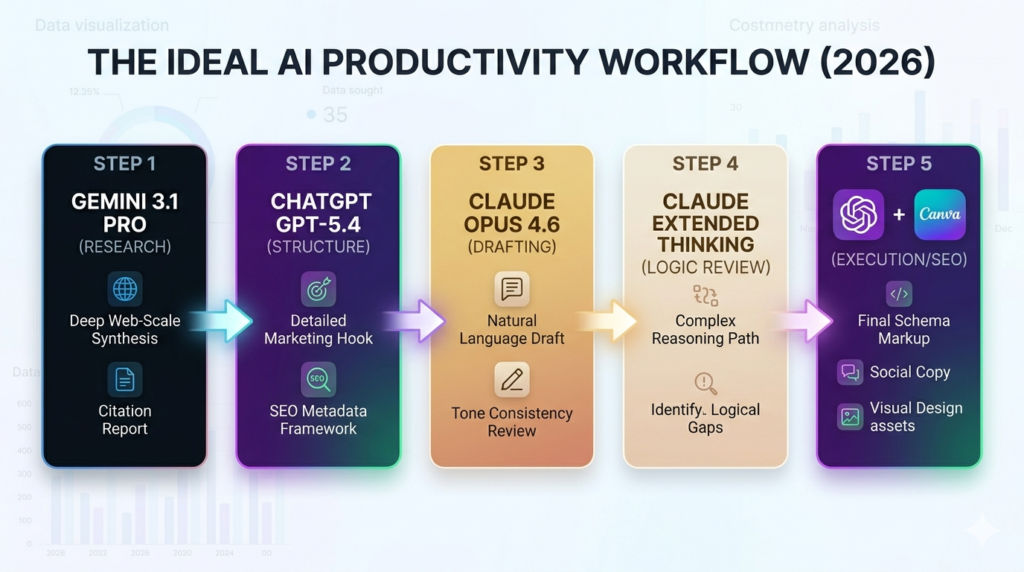

How to Use Claude, ChatGPT, and Gemini Together for Maximum Productivity?

Routing tasks by type across Claude, ChatGPT, and Gemini saves 5–7 hours of work per week, according to a 2026 AiZolo multi-model workspace study.

The 5-step productivity workflow for Claude, ChatGPT, and Gemini is:

- Fact-gathering (Gemini 3.1 Pro): Use Deep Research to synthesize dozens of live web sources and generate a verified citation report as the research foundation

- Ideation and outlining (ChatGPT GPT-5.4): Feed the Gemini research report into ChatGPT to generate structural outlines, marketing hooks, and SEO metadata frameworks

- Drafting (Claude Opus 4.6): Move the outline to Claude for natural-language drafting with consistent tone

- Logical review (Claude extended thinking): Run the draft through Claude’s extended thinking mode to identify logical gaps, factual contradictions, and counterarguments

- SEO execution (ChatGPT + Canva): Use ChatGPT for final schema markup and social copy, and Canva Magic Studio for visual design assets
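The 5-step workflow above is essentially a sequential pipeline in which each step's output becomes the next step's input. The sketch below encodes it as data; `call_model` is a hypothetical callable you would back with each vendor's own SDK, and the model labels are display names from this article, not API model identifiers.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    name: str
    model: str        # display name from this article, not an API model ID
    instruction: str  # what the step asks the model to do


# The article's 5-step workflow, in order.
WORKFLOW = [
    Step("Fact-gathering", "Gemini 3.1 Pro", "Research the topic and cite live sources"),
    Step("Ideation and outlining", "GPT-5.4", "Outline, hooks, and SEO metadata"),
    Step("Drafting", "Claude Opus 4.6", "Write a natural-language draft"),
    Step("Logical review", "Claude Opus 4.6 (extended thinking)", "Find gaps, contradictions, counterarguments"),
    Step("SEO execution", "GPT-5.4 + Canva", "Schema markup, social copy, visuals"),
]

# Hypothetical signature: (model, instruction, input_text) -> output_text.
ModelCall = Callable[[str, str, str], str]


def run_pipeline(call_model: ModelCall, initial_input: str) -> str:
    """Run each step in order, feeding every step's output into the next."""
    text = initial_input
    for step in WORKFLOW:
        text = call_model(step.model, step.instruction, text)
    return text


if __name__ == "__main__":
    # Stub in place of real SDK calls: it just records which model ran.
    def fake_call(model: str, instruction: str, text: str) -> str:
        return f"{text} -> {model}"

    print(run_pipeline(fake_call, "topic"))
```

Keeping the routing in a plain list makes it easy to swap the model for one step without touching the rest of the pipeline.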

This workflow applies across 3 professional use cases: SEO article production, enterprise research reports, and technical documentation. For workflow automation tools that connect these AI models, compare n8n vs Zapier or Make vs Zapier.

Claude vs ChatGPT vs Gemini: Which AI Should You Choose?

There is no universal best AI model in 2026. The optimal choice depends on primary use case, ecosystem integration, and hallucination tolerance.

Choose Claude Opus 4.6 for Reasoning, Coding, and Long Document Analysis

Claude Opus 4.6 is the optimal choice for 4 professional profiles:

- Professional developers performing complex architectural refactoring, multi-repo migrations, and algorithm design requiring multi-step logical verification

- Legal and financial professionals who need a low (~3%) hallucination rate for contract analysis, compliance documentation, and regulatory reporting

- Technical writers producing long-form documentation maintaining specific voice and structural consistency across 10,000+ word outputs

- High-accountability teams in regulated industries requiring auditable AI decision-making through Anthropic’s published Constitutional AI framework

Choose ChatGPT GPT-5.4 for Versatility, Image Generation, and Broad Use Cases

ChatGPT GPT-5.4 is the optimal choice for 4 professional profiles:

- Content creators and marketers requiring image generation via DALL-E 4, video creation via Sora, and iterative copy refinement through Canvas

- General business users needing the most versatile general-purpose AI model for drafting, summarizing, ideating, and automating repetitive tasks

- IT administrators and DevOps engineers requiring computer use automation and CI/CD pipeline management at 75.1% Terminal-Bench 2.0

- Microsoft ecosystem users needing deep integration with Teams, Word, Outlook, Excel, and Azure DevOps

Choose Gemini 3.1 Pro for Google Workspace and Real-Time Research

Gemini 3.1 Pro is the optimal choice for 4 professional profiles:

- Researchers and academics who use Deep Research to automatically synthesize dozens of web sources into structured reports with verified citations

- Google Workspace users needing AI integrated directly into Gmail, Docs, Sheets, and Meet without switching applications

- Data analysts and scientists processing native video, audio, satellite imagery, and large datasets requiring the highest multimodal input capability

- Budget-conscious developers running high-volume API workflows, where Gemini’s $2.00 per 1 million input tokens is 7.5x cheaper than Claude Opus 4.6’s $15.00