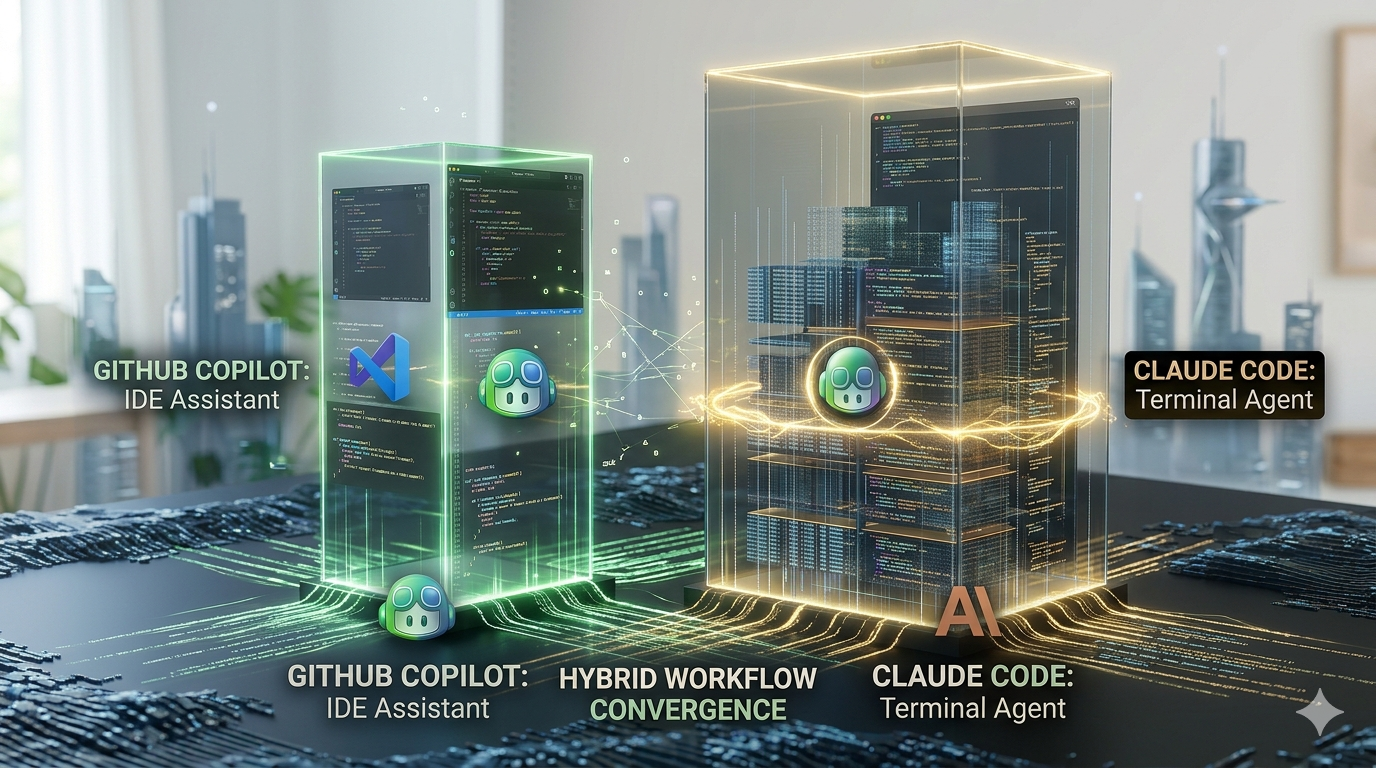

GitHub Copilot vs Claude Code: IDE Assistant vs Terminal Agent in 2026

GitHub Copilot is an IDE-first coding assistant by Microsoft that delivers inline suggestions inside VS Code and 5 other editors. Claude Code is a terminal-first agentic coding tool by Anthropic that autonomously plans and executes multi-file repository changes. Copilot Pro costs $10/month; Claude Code Max 5x costs $100/month.

What Is the Fundamental Difference Between GitHub Copilot and Claude Code?

GitHub Copilot and Claude Code represent 2 distinct AI coding philosophies. Copilot accelerates developer-driven coding inside the IDE; Claude Code autonomously plans and executes developer-assigned tasks from the terminal.

What Is GitHub Copilot as an IDE-Based Coding Assistant by Microsoft?

GitHub Copilot is an AI-powered coding assistant developed by Microsoft and GitHub, launched in 2021. It integrates with 6 major editors: VS Code, Visual Studio, JetBrains IDEs, Neovim, Sublime Text, and Vim.

Copilot delivers 2 core capabilities:

- Inline code completions: real-time suggestions that appear during active typing inside the editor.

- Copilot Chat: a conversational interface for code explanation, refactoring, and debugging, available in VS Code, JetBrains, and Visual Studio.

Copilot integrates natively with GitHub.com, extending AI assistance to pull requests, issues, and code review workflows. GitHub reports millions of individual users and tens of thousands of business customers, making it the most widely adopted AI developer tool globally.

For a full feature comparison of Copilot against another widely used AI assistant, see Copilot vs ChatGPT.

What Is Claude Code as a Terminal-Based Agentic Coding Tool by Anthropic?

Claude Code is an agentic coding tool developed by Anthropic, first released as a research preview in February 2025. It operates from the terminal, reading the full repository structure before planning and executing multi-file changes.

Claude Code processes up to 1 million tokens using Opus 4.6, enabling it to ingest an entire codebase, design documents, and error logs in a single session. By default, it asks for developer approval before applying each file change, giving developers control over every execution step.

3 technical capabilities distinguish Claude Code from IDE-based tools:

- Agent Teams: parallel sub-agents with shared task lists and dependency tracking across the full codebase.

- Model Context Protocol (MCP) support: direct connections to databases, APIs, and external services during a coding session.

- Autonomous multi-file execution: changes spanning entire repositories, as demonstrated by the Rakuten case study, in which 40+ files were edited across a 7-hour session with zero human input.

For a broader comparison of Claude’s AI capabilities, see Claude vs ChatGPT.

GitHub Copilot vs Claude Code: 7 Technical Feature Differences

The 7 technical differences between GitHub Copilot and Claude Code span context window capacity, IDE integration depth, agent architecture, MCP connectivity, model selection, benchmark performance, and developer productivity metrics.

| Feature | GitHub Copilot | Claude Code |
| --- | --- | --- |
| Context window | 32,000–200,000 tokens (model-dependent) | 1,000,000 tokens (Opus 4.6) |
| IDE support | 6 editors: VS Code, Visual Studio, JetBrains, Neovim, Sublime Text, Vim | Terminal-first; works alongside any editor via shell |
| Agent mode | Copilot Agent Mode (2026); IDE-bound; limited beyond 10 simultaneous files | Agent Teams: parallel sub-agents with shared task lists and dependency tracking |
| MCP integration | Not natively supported | Natively supported; connects to PostgreSQL, Redis, and CI/CD systems |
| Model options | GPT-4o (default); Claude Sonnet 4.6 and Opus 4.6 via multi-model selector | Claude Haiku, Sonnet 4.6, and Opus 4.6 within the Claude Code stack |
| SWE-bench Verified score | Not published | 80.8% using Opus 4.6 |
| Developer productivity metric | 55% faster coding; 75% higher job satisfaction (GitHub research) | 80.8% real GitHub issue resolution rate (SWE-bench Verified) |

How Do Context Windows Differ Between Claude Code and GitHub Copilot?

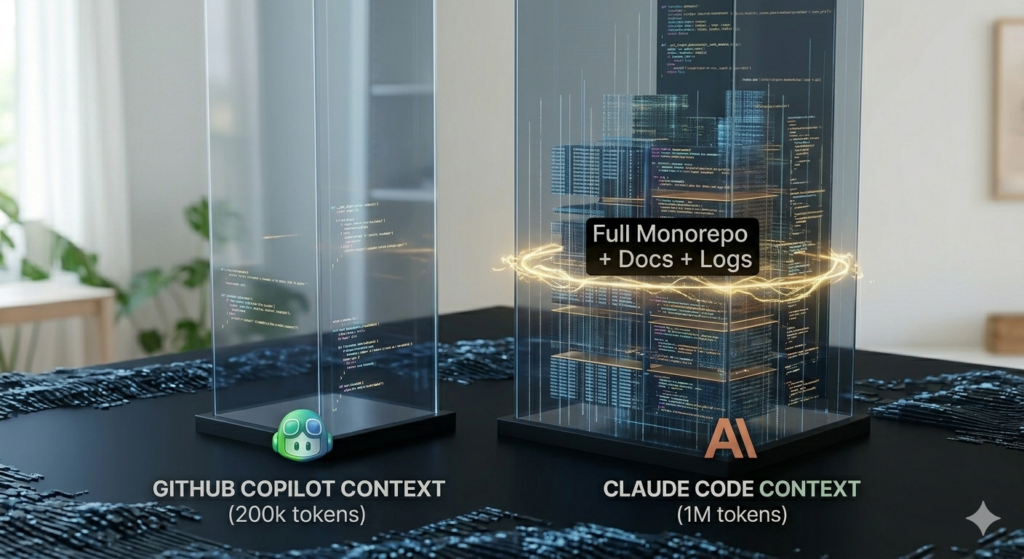

Claude Code processes 1 million tokens using Opus 4.6; GitHub Copilot processes 32,000 to 200,000 tokens depending on the model selected in Copilot Chat or Agent Mode.

| Tool | Context window | Practical scope |
| --- | --- | --- |
| Claude Code (Opus 4.6) | 1,000,000 tokens | Full monorepo + design docs + error logs in one session |
| GitHub Copilot | 32,000–200,000 tokens | File-level and function-level tasks |

The practical impact of this gap is direct. A 1-million-token context allows Claude Code to process an entire codebase alongside documentation and error logs simultaneously. A 32,000-to-200,000-token range limits Copilot to 1 or 2 files at a time for long-range refactoring tasks.

3 task types require repository-wide context that Copilot’s window does not cover at the same depth:

- Cross-service dependency analysis: understanding how API changes in one service affect consuming services.

- Architecture-level migrations: restructuring authentication patterns or data models across a full monorepo.

- Large-module test generation: generating tests that cover function interactions across an entire file, not just isolated units.
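
Whether a given repository fits inside one of these windows can be estimated before starting a session. The sketch below is a rough check only: the 4-characters-per-token ratio is an assumed heuristic (real tokenizers differ), the function names are hypothetical, and the window sizes are the figures quoted in this article.

```python
import os

# Assumption: roughly 4 characters per token. Real tokenizers vary,
# so treat the result as an order-of-magnitude estimate.
CHARS_PER_TOKEN = 4

# Context window sizes as quoted in this article.
CONTEXT_WINDOWS = {
    "Claude Code (Opus 4.6)": 1_000_000,
    "GitHub Copilot (upper bound)": 200_000,
    "GitHub Copilot (lower bound)": 32_000,
}


def estimate_tokens(num_chars: int) -> int:
    """Convert a character count into an approximate token count."""
    return num_chars // CHARS_PER_TOKEN


def count_repo_chars(root: str, extensions=(".py", ".md")) -> int:
    """Sum the characters of all files under `root` with the given extensions."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(extensions):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total += len(handle.read())
    return total


def fits(num_tokens: int) -> dict:
    """Report, per tool, whether the token count fits in its context window."""
    return {name: num_tokens <= limit for name, limit in CONTEXT_WINDOWS.items()}
```

Calling `fits(estimate_tokens(count_repo_chars(".")))` from a repository root gives a first indication of which window the codebase fits into; under the stated heuristic, a 2.4-million-character codebase is about 600,000 tokens, which fits the 1-million-token window but not the 200,000-token upper bound.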

Which IDEs Does GitHub Copilot Support Natively in 2026?

GitHub Copilot integrates natively with 6 major editors in 2026: VS Code, Visual Studio, JetBrains IDEs, Neovim, Sublime Text, and Vim.

The 6 editors with native GitHub Copilot support are:

- Visual Studio Code: inline completions and full Copilot Chat interface.

- Visual Studio: inline completions and full Copilot Chat interface.

- JetBrains IDEs: IntelliJ IDEA, PyCharm, WebStorm, and all JetBrains suite products.

- Neovim: inline completions via the official Copilot extension.

- Sublime Text: inline completions via the official extension.

- Vim: inline completions via the official Copilot extension.

Copilot Chat, the conversational refactoring and explanation interface, is available in VS Code, JetBrains, and Visual Studio only. Editors outside this group receive inline completions without the full chat interface.

Claude Code does not require an IDE plugin. It operates from the terminal and works alongside any editor through the shell, providing editor flexibility without IDE dependency. For a comparison of IDE-integrated tools, see Cursor vs Copilot.

What Is MCP Support in Claude Code and How Does It Extend Tool Integration?

Model Context Protocol (MCP) is an open standard that enables AI agents to connect directly to external tools, databases, and services during a live development session.

Claude Code natively supports MCP, enabling 3 categories of external tool connections:

- Database access: direct queries to PostgreSQL and Redis during a coding session, allowing Claude Code to inspect schemas and adjust generated code based on live data.

- API integration: real-time calls to external services without leaving the terminal workflow.

- CI/CD system connections: interaction with build pipelines and deployment systems during autonomous task execution.

GitHub Copilot does not natively support MCP. Copilot’s integrations remain editor-bound and GitHub-ecosystem-centric, relying on IDE plugins and the GitHub API rather than the MCP standard.

A direct example of MCP in practice: Claude Code connects to a PostgreSQL database, runs a query to inspect the schema, generates migration code based on the live data structure, and executes the changes across the affected files without exiting the terminal.
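
The inspect-then-generate pattern in that example can be illustrated without MCP at all. The sketch below is not MCP code and not how Claude Code is implemented: it uses Python's built-in `sqlite3` module as a stand-in for PostgreSQL, and the helper names `inspect_columns` and `generate_migration` are hypothetical. It only shows why reading the live schema first leads to a more accurate migration.

```python
import sqlite3


def inspect_columns(conn: sqlite3.Connection, table: str) -> list:
    """Return the column names of `table` by querying the live schema."""
    # Table names are trusted in this sketch; do not interpolate user input.
    rows = conn.execute(f"PRAGMA table_info({table})").fetchall()
    return [row[1] for row in rows]  # index 1 holds the column name


def generate_migration(conn, table: str, column: str, col_type: str):
    """Emit an ALTER TABLE statement only if the column is actually missing."""
    if column in inspect_columns(conn, table):
        return None  # nothing to migrate
    return f"ALTER TABLE {table} ADD COLUMN {column} {col_type}"


# In-memory database standing in for a live PostgreSQL instance.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")

# Step 1: inspect the schema. Step 2: generate the migration. Step 3: apply it.
migration = generate_migration(conn, "users", "last_login", "TEXT")
if migration:
    conn.execute(migration)
```

Because the generator checks the live schema, running it a second time for the same column returns `None` instead of producing a duplicate migration.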

GitHub Copilot vs Claude Code: SWE-bench and Aider Polyglot Benchmark Results

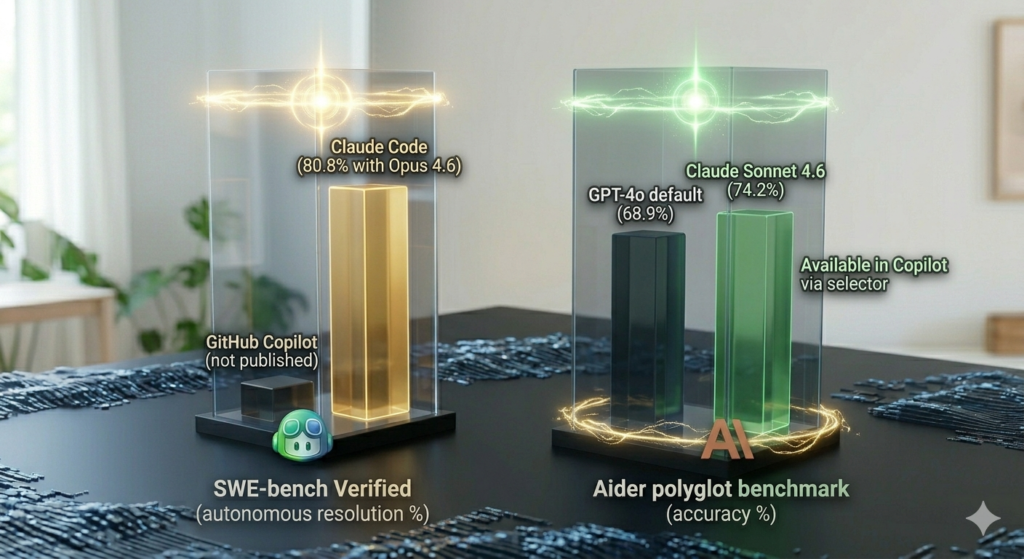

The 3 measurement types used in 2026 measure fundamentally different outcomes. SWE-bench Verified measures autonomous real-world issue resolution. The Aider polyglot benchmark measures multi-language code generation accuracy. Developer productivity surveys measure perceived speed and satisfaction. These 3 measurements answer 3 different questions, so direct comparison across them produces no valid conclusion.

| Benchmark type | GitHub Copilot (2026) | Claude Code (2026) |
| --- | --- | --- |
| SWE-bench Verified | Not published | 80.8% (Opus 4.6) |
| Aider polyglot | 68.9% (GPT-4o default) | 74.2% (Claude Sonnet 4.6) |
| Developer productivity survey | 55% faster coding; 75% higher satisfaction | 89% branch coverage on full-module test generation |

What SWE-bench Verified Score Does Claude Code Achieve with Opus 4.6 in 2026?

Claude Code achieves 80.8% on SWE-bench Verified using Opus 4.6, the highest published score for any coding agent in 2026. GitHub Copilot does not publish an equivalent autonomous benchmark score.

SWE-bench Verified tests AI tools against real open-source GitHub issues. Each issue requires multi-file edits, test generation, and dependency-aware code changes. A score of 80.8% means Claude Code autonomously resolves 80.8 out of 100 real-world engineering tasks without human intervention during execution.
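
To make the percentage concrete: SWE-bench Verified contains 500 human-validated task instances, so the score is simply resolved tasks divided by 500. The short sketch below converts between the two; the function names are illustrative and not part of any benchmark tooling.

```python
# SWE-bench Verified contains 500 human-validated task instances.
TOTAL_TASKS = 500


def resolved_count(score_percent: float, total: int = TOTAL_TASKS) -> int:
    """Number of tasks resolved for a given percentage score."""
    return round(score_percent / 100 * total)


def resolution_rate(resolved: int, total: int = TOTAL_TASKS) -> float:
    """Percentage score for a given number of resolved tasks."""
    return 100 * resolved / total


print(resolved_count(80.8))   # 404
print(resolution_rate(404))   # 80.8
```

An 80.8% score therefore corresponds to 404 resolved tasks and 96 unresolved ones.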

GitHub Copilot’s published metrics measure a different outcome: inline coding speed and developer satisfaction, not autonomous task completion. The 2 benchmarks are not comparable: they measure fundamentally different tool capabilities for fundamentally different use cases.

What Score Do Claude Sonnet 4.6 and GPT-4o Achieve on the Aider Polyglot Benchmark?

Claude Sonnet 4.6 scores 74.2% on the Aider polyglot benchmark; GPT-4o scores 68.9% on the same benchmark, a 5.3 percentage point gap across 7 languages: Python, JavaScript, TypeScript, Go, Rust, Java, and C++.

One critical nuance closes this gap for Copilot users. GitHub Copilot Pro supports Claude Sonnet 4.6 via the multi-model selector inside Copilot Chat and Agent Mode. Copilot users who select Claude Sonnet 4.6 access the same 74.2% polyglot performance as Claude Code Pro users. The gap exists only when Copilot operates on its default GPT-4o model.

What Developer Productivity Improvement Does GitHub Copilot Produce?

GitHub Copilot produces a 55% improvement in task completion speed and a 75% increase in job satisfaction, according to GitHub’s published research.

These 2 metrics measure inline coding productivity: the speed at which developers complete editor-based tasks when Copilot provides active real-time suggestions. They do not measure autonomous task completion, repository-level reasoning, or multi-file execution accuracy.

The distinction matters directly when comparing Copilot against Claude Code. Copilot’s 55% speed improvement applies to routine daily coding tasks: writing functions, generating boilerplate, and completing test stubs. Claude Code’s 80.8% SWE-bench score applies to autonomous resolution of complex engineering issues across multiple files with no developer intervention.

GitHub Copilot Agent Mode vs Claude Code Agent Teams: 4 Agentic Differences

Both GitHub Copilot and Claude Code introduced agent capabilities in 2025–2026, but they operate on different architectures. Copilot Agent Mode is IDE-bound with limited cross-file scope. Claude Code Agent Teams are terminal-based with full-repository autonomy and parallel sub-agent coordination. The 4 agentic differences are: execution environment, cross-file scope, coordination model, and review architecture.

What Tasks Does GitHub Copilot Agent Mode Execute Autonomously Since February 2026?

GitHub Copilot Agent Mode, which reached general availability in 2026, executes 4 autonomous task types inside VS Code: feature branch creation, code implementation, test writing, and pull request opening.

The 4 autonomous tasks Copilot Agent Mode executes are:

- Feature branch creation: opens a new Git branch for the assigned task before writing any code.

- Code implementation: writes the described feature across affected files within the active VS Code session.

- Test writing: generates unit tests for the implemented code after completing the implementation step.

- Pull request opening: creates a GitHub PR with a generated title and description after task completion.

Copilot Agent Mode iterates and self-corrects errors autonomously without stopping for human review between steps. Developer reports consistently identify 1 documented limitation: Copilot Agent Mode struggles with changes spanning more than 10 files simultaneously, requiring developers to split large refactors into manual batches.
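
The manual batching described above is easy to script. A minimal sketch, assuming the 10-file figure reported by developers is used as the batch size; the helper name and the file paths are hypothetical.

```python
def split_into_batches(files: list, batch_size: int = 10) -> list:
    """Split a list of file paths into consecutive batches of at most `batch_size`."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [files[i:i + batch_size] for i in range(0, len(files), batch_size)]


# Hypothetical refactor touching 42 files.
changed_files = [f"src/module_{n}.py" for n in range(42)]
batches = split_into_batches(changed_files)

print(len(batches))               # 5
print([len(b) for b in batches])  # [10, 10, 10, 10, 2]
```

Each batch can then be handed to the agent as a separate task. Splitting by position is the simplest option; grouping files by module or dependency would usually produce more coherent batches.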

How Do Claude Code Agent Teams Coordinate Parallel Sub-Agents Across a Codebase?

Claude Code Agent Teams coordinate parallel sub-agents using 3 coordination components: a shared task list, dependency tracking, and a dedicated context window per sub-agent.

The 3 coordination components function as follows:

- Shared task list: all sub-agents read from and write to a single task list, preventing duplicate edits and maintaining a real-time view of overall session progress.

- Dependency tracking: the system sequences changes in dependency order, ensuring services that depend on modified code are updated only after their dependencies are resolved.

- Dedicated context window per sub-agent: each sub-agent receives its own full context window, preventing context contamination across parallel workstreams running on different parts of the codebase.
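
Dependency-ordered scheduling of this kind is a standard topological sort. The sketch below uses Python's standard-library `graphlib` to group tasks into waves, where every task in a wave could run in parallel because its dependencies are already done. It illustrates the general technique only and makes no claim about Claude Code's internals; the service names are invented.

```python
from graphlib import TopologicalSorter


def plan_waves(dependencies: dict) -> list:
    """Group tasks into waves; each wave depends only on earlier waves."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)                 # mark the whole wave as finished
    return waves


# Hypothetical services: each key maps to the set of things it depends on.
deps = {
    "billing-service": {"shared-api"},
    "checkout-service": {"shared-api"},
    "web-frontend": {"billing-service", "checkout-service"},
}

waves = plan_waves(deps)
print(waves)
# [['shared-api'], ['billing-service', 'checkout-service'], ['web-frontend']]
```

The second wave is where parallel sub-agents would pay off: both services depend only on `shared-api`, so they can be updated at the same time.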

The Rakuten case study demonstrates this architecture at production scale. A Claude Code session edited 40+ files across a 7-hour period with zero human input, completing a deprecated API migration in correct dependency order across an entire production codebase. For a comparison of Claude Code against another terminal-capable agentic tool, see Claude Code vs Cursor.

How Does Human-in-the-Loop Review in Claude Code Differ from Copilot’s Self-Healing Approach?

Claude Code requires explicit developer approval before committing every file change. GitHub Copilot Agent Mode iterates and self-corrects errors without requiring approval between individual steps.

The 2 review architectures produce 4 measurable differences:

| Dimension | GitHub Copilot Agent Mode | Claude Code |
| --- | --- | --- |
| Approval requirement | None between steps; agent iterates continuously | Required before every file change is committed |
| Error handling | Self-heals autonomously during iteration | Surfaces errors for developer review before proceeding |
| Control level | Lower; agent drives full session | Higher; developer approves each file-level change |
| Best fit | Low-risk scoped tasks (features, tests) | High-risk refactors (migrations, architecture changes) |

Claude Code’s stop-for-approval model suits complex refactors where each file change carries production risk. Copilot Agent Mode’s self-healing model suits lower-risk tasks where speed is the primary requirement, such as generating a test suite for a scoped feature branch.
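
The two review models reduce to two different control loops. The sketch below is a conceptual illustration, not either product's implementation: `run_with_approval` gates each change on a callback, while `run_self_healing` retries until a check passes. All function names and paths are hypothetical.

```python
from typing import Callable, Dict, List


def run_with_approval(changes: Dict[str, str],
                      approve: Callable[[str, str], bool]) -> List[str]:
    """Apply only the changes the reviewer approves, one file at a time."""
    applied = []
    for path, diff in changes.items():
        if approve(path, diff):  # a human decision point for every file
            applied.append(path)
    return applied


def run_self_healing(attempt: Callable[[int], bool], max_attempts: int = 3) -> int:
    """Retry without review until the checks pass; return the attempts used."""
    for n in range(1, max_attempts + 1):
        if attempt(n):  # for example, "did the test suite pass?"
            return n
    raise RuntimeError("task did not pass checks within the attempt budget")


# Reviewer policy for the example: reject anything under migrations/.
changes = {"src/auth.py": "diff-1", "migrations/0042.sql": "diff-2"}
applied = run_with_approval(changes, lambda path, _diff: not path.startswith("migrations/"))

# Checks fail on the first attempt and pass on the second.
attempts_used = run_self_healing(lambda n: n >= 2)
```

The approval loop trades speed for a checkpoint on every file; the retry loop trades checkpoints for speed, which is why the table pairs it with lower-risk tasks.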

GitHub Copilot Pricing vs Claude Code Pricing: Plans from $0 to $200 per Month

GitHub Copilot and Claude Code offer 8 total plans across 4 tiers each, spanning from $0 to enterprise pricing. The pricing gap reflects the tools’ different use cases: Copilot targets everyday IDE-integrated coding; Claude Code targets autonomous complex-task execution requiring sustained high-context sessions.

What Are GitHub Copilot’s 4 Pricing Plans and Their Monthly Costs in 2026?

GitHub Copilot offers 4 pricing plans in 2026, ranging from $0 to enterprise tier, each adding model access, premium request volume, and governance capabilities.

The 4 GitHub Copilot plans are:

- Copilot Free $0/month: 2,000 code completions per month; 50 premium requests; basic inline completions; limited model access. Suits light personal projects and evaluation.

- Copilot Pro $10/month: Unlimited code completions; 300 premium requests per month; access to all available models including Claude Sonnet 4.6 and Opus 4.6; Copilot Agent Mode access.

- Copilot Pro+ $19/month (verify current pricing at github.com/features/copilot/plans): Larger premium request allowance; higher-rate model access; GitHub Spark access included.

- Copilot Enterprise $39/user/month: Centralized policy controls; audit logs; EU data residency; codebase-wide repository indexing; custom private model support; license assignment across the organization.

Premium requests consumed by Agent Mode, Copilot Chat, code review, and CLI features incur overage charges of $0.04 per request beyond the monthly plan allowance on Pro and Pro+ tiers.
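
As a worked example of the overage arithmetic, the sketch below computes a monthly bill from the figures quoted above (Pro at $10/month with 300 premium requests, $0.04 per additional request). The usage number is invented for illustration.

```python
OVERAGE_PER_REQUEST = 0.04  # USD per premium request beyond the allowance


def monthly_cost(base_price: float, allowance: int, requests_used: int) -> float:
    """Base subscription plus overage for requests beyond the plan allowance."""
    extra_requests = max(0, requests_used - allowance)
    return round(base_price + extra_requests * OVERAGE_PER_REQUEST, 2)


# Copilot Pro with a hypothetical 450 premium requests in one month:
# 150 extra requests * $0.04 = $6.00 on top of the $10 base price.
print(monthly_cost(10, 300, 450))  # 16.0
print(monthly_cost(10, 300, 120))  # 10.0 (under the allowance, no overage)
```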

What Are Claude Code’s 4 Pricing Plans and Their Monthly Costs in 2026?

Claude Code offers 4 pricing tiers in 2026, designed for developers ranging from occasional complex-task users to engineers running agentic coding sessions 6+ hours daily.

The 4 Claude Code plans are:

- Claude Code Free: Limited access; not prominently featured as a consumer entry point. The Pro plan at $20/month is the practical starting tier for regular use.

- Claude Code Pro $20/month: Access to core agentic features including Agent Teams and MCP integrations; rate-limited at approximately 44,000 tokens per 5-hour window; Claude Sonnet 4.6 as the default model.

- Claude Code Max 5x $100/month: No per-request metering on standard operations; full access to Opus 4.6 with 1-million-token context; designed for developers using AI coding tools 6+ hours daily.

- Claude Code Max 20x: Highest tier for large engineering teams and heavy concurrent agent workloads. (Verify current pricing at anthropic.com; figures vary across published sources.)

The Pro plan’s rate limit is the most consequential constraint for daily users. At approximately 44,000 tokens per 5-hour window, a single large refactoring session consumes the full allowance. Developer reports confirm that Max 5x at $100/month is the practical minimum for sustained daily agentic use.
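
The effect of the rate limit can be estimated with simple arithmetic. The sketch below assumes the allowance resets in full at the start of each 5-hour window, which is a simplification, and the 120,000-token session size is a hypothetical figure rather than a measurement.

```python
import math

TOKENS_PER_WINDOW = 44_000  # approximate Pro allowance quoted above
WINDOW_HOURS = 5


def windows_needed(session_tokens: int) -> int:
    """Number of rate-limit windows a session of this size spans."""
    return max(1, math.ceil(session_tokens / TOKENS_PER_WINDOW))


def minimum_wait_hours(session_tokens: int) -> int:
    """Hours spent waiting for resets, assuming a full reset per window."""
    return (windows_needed(session_tokens) - 1) * WINDOW_HOURS


# Hypothetical refactor consuming 120,000 tokens in total.
print(windows_needed(120_000))      # 3
print(minimum_wait_hours(120_000))  # 10
```

Under these assumptions, a task that needs about three windows of tokens cannot finish on the Pro plan without at least 10 hours of waiting, which is the practical argument for the Max 5x tier.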

Copilot Pro at $10 vs Claude Code Pro at $20: Which Plan Suits Which Developer Workflow?

Copilot Pro at $10/month suits developers who need unlimited inline completions for daily editor-based coding. Claude Code Pro at $20/month suits developers who need occasional autonomous multi-file task execution on complex projects.

The 2 Pro plans serve different workflows despite a $10/month price difference:

| Dimension | Copilot Pro ($10/month) | Claude Code Pro ($20/month) |
| --- | --- | --- |
| Primary use | Daily inline completions inside VS Code or JetBrains | Occasional autonomous multi-file refactoring sessions |
| Completion volume | Unlimited code completions | Rate-limited (~44,000 tokens per 5-hour window) |
| Model access | Claude Sonnet 4.6, Opus 4.6, GPT-4o via multi-model selector | Claude Sonnet 4.6 default; Opus 4.6 at higher tier |
| Agent capability | Copilot Agent Mode (limited beyond 10 files) | Agent Teams with full-repository parallel execution |
| Best fit | Budget-first developers needing daily IDE speed | Complex-project developers needing agentic depth occasionally |

Claude Code Pro’s rate limit makes it unsuitable as a daily driver for engineers running multiple long agentic sessions per day. Developers requiring sustained agentic execution across multi-hour sessions need Claude Code Max 5x at $100/month. For pricing comparisons that include Cursor’s plan structure alongside both tools, see Cursor vs Copilot.

GitHub Copilot vs Claude Code: Which Tool Fits 3 Developer Profiles?

Tool selection in 2026 depends on 3 criteria: workflow environment, codebase size, and monthly budget. The 3 developer profiles below assign each tool based on these 3 criteria using documented capabilities and confirmed use-case data.

Which Tool Do Beginner Developers Use for Daily Coding in VS Code?

Beginner developers use GitHub Copilot for daily coding: it installs as a VS Code extension with zero terminal configuration and provides inline suggestions during active editing at $0/month on the Free plan.

3 attributes make GitHub Copilot the correct tool for beginner developers:

- Zero-friction setup: the VS Code extension installs in under 2 minutes with no terminal commands, shell configuration, or repository initialization required before seeing the first suggestion.

- Free entry point: Copilot Free at $0/month provides 2,000 completions and 50 premium requests, sufficient for learning workflows and small personal projects without financial commitment.

- Immediate in-editor feedback: suggestions appear inline as the developer types, providing context-aware assistance visible directly in the active file without context switching to a terminal.

Claude Code requires terminal proficiency, shell navigation, and sufficient understanding of repository structure to assign meaningful tasks to an agent. These prerequisites make Claude Code unsuitable for developers still developing foundational coding skills. For beginner-accessible AI coding tool comparisons, see Copilot vs ChatGPT.

Which Tool Do Senior Developers Use for Large Codebase Refactoring?

Senior developers working on large codebases use Claude Code: its 1-million-token context window processes entire monorepos in a single session, enabling architecture-level changes that exceed Copilot’s context range.

3 capabilities drive Claude Code adoption among senior developers:

- 1-million-token context window: ingests the full codebase, design documentation, and error logs simultaneously in one session, enabling cross-service dependency reasoning that Copilot’s 32,000-to-200,000-token range does not support at the same depth.

- Agent Teams with dependency tracking: parallel sub-agents sequence changes across modules in dependency order, eliminating the manual batching that Copilot Agent Mode requires when changes span more than 10 files.

- MCP integrations: direct connections to PostgreSQL, Redis, and CI/CD systems allow data-aware code generation based on live schema or pipeline state without leaving the terminal workflow.

The Rakuten case study confirms this at production scale: a Claude Code session edited 40+ files across 7 hours with zero human input, completing a deprecated API surface migration in correct dependency order. For a direct comparison of Claude Code against another terminal-capable agentic tool, see Claude Code vs Cursor.

Which Tool Do Development Teams Use for Enterprise-Scale Coding Workflows?

Development teams use GitHub Copilot Business and Enterprise for enterprise-scale workflows. These 2 plans provide the 4 governance and compliance capabilities that enterprise engineering organisations require.

The 4 enterprise-exclusive capabilities in Copilot Business and Enterprise are:

- Centralised policy controls: administrators configure content filters, feature availability, and model access across all licensed developers from a single management interface without per-user configuration.

- Audit logs: all Copilot usage activity is recorded, timestamped, and exportable for compliance reporting, security review, and developer productivity analysis.

- EU data residency: customer data storage restricted to European Union regions for organisations subject to GDPR and equivalent data sovereignty regulations.

- Codebase-wide indexing: the Enterprise plan indexes the organisation’s private repositories, enabling Copilot suggestions to reference internal naming conventions, proprietary patterns, and prior implementations.

GitHub reports tens of thousands of business customers on enterprise tiers, with a mature SLA structure, an established enterprise support model, and a tooling ecosystem that extends across the full GitHub platform.

How to Use GitHub Copilot and Claude Code Together in One Development Stack

Advanced engineering teams in 2026 use GitHub Copilot and Claude Code as 2 complementary layers in one development stack, not as competing alternatives. Copilot handles the speed layer: low-latency inline assistance during active coding. Claude Code handles the depth layer: autonomous execution of complex multi-file tasks. The combined monthly cost is $110/month per developer on Copilot Pro ($10) and Claude Code Max 5x ($100).

What Daily Tasks Belong to GitHub Copilot in a Combined AI Development Workflow?

GitHub Copilot handles 4 daily task types in a hybrid AI development stack: inline code completions, commit message generation, pull request assistance, and quick Copilot Chat queries.

The 4 daily Copilot tasks in a hybrid stack are:

- Inline completions: real-time function, class, and boilerplate suggestions inside VS Code or JetBrains during active editing, with sub-second response latency.

- Commit message generation: AI-generated commit messages based on staged diff contents, produced directly inside the IDE without leaving the editor.

- Pull request assistance: PR title generation, description writing, and inline code review comments integrated natively into GitHub.com pull request workflows.

- Quick Copilot Chat queries: in-editor explanations, isolated function refactors, and targeted debugging assistance on specific code blocks without switching to a terminal session.

These 4 tasks share 3 common requirements: low response latency, IDE-native execution, and no need for full-repository context. None of them require the 1-million-token context, Agent Teams coordination, or MCP integrations that Claude Code provides; assigning them to Claude Code adds cost and latency without proportional benefit.

What Complex Tasks Belong to Claude Code in a Combined AI Development Workflow?

Claude Code handles 3 complex task types in a hybrid AI development stack: large-scale multi-file refactoring, full-module test generation, and architecture-level migrations with Agent Teams coordination.

The 3 complex Claude Code tasks in a hybrid stack are:

- Large-scale multi-file refactoring: restructuring API contracts, authentication patterns, or data models across an entire monorepo, including all consuming services, affected tests, and dependent configuration files.

- Full-module test generation: generating test suites covering complete module behaviour, including edge cases that require understanding function interactions across the entire file. In a documented benchmark, Claude Code generated 147 tests covering 89% of branches on a 3,000-line Python module; GitHub Copilot generated 92 tests covering 71% of the same module.

- Architecture migrations with dependency tracking: migrating deprecated APIs, restructuring service boundaries, or updating framework versions across a codebase in the correct execution order using Agent Teams parallel sub-agent coordination.

These 3 tasks share 1 common requirement: repository-wide context. Copilot’s 32,000-to-200,000-token context window does not support the cross-file reasoning these tasks demand at the same completion depth and accuracy. For a comparison of Claude Code against other agentic tools in this category, see Claude Code vs Cursor.

GitHub Copilot vs Claude Code: 5 Decision Criteria and Frequently Asked Questions

The final choice between GitHub Copilot and Claude Code depends on 5 decision criteria: use case type, monthly budget, IDE workflow dependency, codebase size, and autonomy requirement. The 2 decision blocks below assign each tool based on these 5 criteria using confirmed specifications.

| Decision criterion | Choose GitHub Copilot | Choose Claude Code |
| --- | --- | --- |
| Use case | Daily inline coding tasks | Complex multi-file autonomous execution |
| Monthly budget | $10/month (Pro) | $100/month (Max 5x for daily use) |
| Workflow environment | IDE-first (VS Code, JetBrains) | Terminal-comfortable developers |
| Codebase size | File-level and function-level scope | Full monorepo with cross-service dependencies |
| Autonomy requirement | Developer-in-the-loop line by line | Developer-assigned tasks reviewed at diff stage |
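
The table can be read as a simple checklist. The sketch below encodes the 5 criteria as boolean votes and applies a majority rule. Both the equal weighting and the majority threshold are assumptions made for illustration, not something the comparison prescribes, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DeveloperProfile:
    complex_multifile_tasks: bool  # use case
    monthly_budget_usd: float      # monthly budget
    terminal_comfortable: bool     # workflow environment
    monorepo_scale: bool           # codebase size
    reviews_at_diff_stage: bool    # autonomy requirement


def recommend_tool(profile: DeveloperProfile) -> str:
    """Count the criteria that point at the Claude Code column of the table."""
    votes_for_claude_code = sum([
        profile.complex_multifile_tasks,
        profile.monthly_budget_usd >= 100,  # Max 5x price quoted in the table
        profile.terminal_comfortable,
        profile.monorepo_scale,
        profile.reviews_at_diff_stage,
    ])
    # Assumed rule: a simple majority of the 5 criteria decides.
    return "Claude Code" if votes_for_claude_code >= 3 else "GitHub Copilot"


senior = DeveloperProfile(True, 100, True, True, True)
beginner = DeveloperProfile(False, 10, False, False, False)
print(recommend_tool(senior))    # Claude Code
print(recommend_tool(beginner))  # GitHub Copilot
```

In practice some criteria matter more than others. A team that cannot spend $100/month per developer, for example, may treat budget as a hard constraint rather than one vote among five.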

Use GitHub Copilot for Inline Coding Speed and Multi-Model Access at $10 per Month, if Budget and IDE Integration Are the Priority

GitHub Copilot Pro at $10/month delivers unlimited inline completions, Copilot Agent Mode access, and multi-model selection including Claude Sonnet 4.6 and Opus 4.6, all without terminal configuration.

4 developer profiles where GitHub Copilot is the confirmed correct choice:

- Budget-constrained developers: Copilot Pro provides the broadest feature set at the lowest price point of any AI coding assistant with agent capabilities in 2026.

- IDE-first workflow developers: developers whose full workflow lives inside VS Code, JetBrains, or Visual Studio and who do not require terminal-based autonomous execution.

- Daily routine coding task developers: writing controllers, DTOs, boilerplate, and unit tests where typing speed and in-editor UX are the primary productivity drivers.

- GitHub-ecosystem teams: organisations using GitHub for PRs, issues, and Actions who benefit from Copilot’s native GitHub.com integration across the full development lifecycle.

Copilot Pro users who select Claude Sonnet 4.6 in the multi-model selector access the same 74.2% Aider polyglot performance as Claude Code Pro users; the model quality gap closes at the Pro tier. For a comparison of Copilot’s AI capabilities against Google’s model, see Gemini vs Copilot.

Use Claude Code for Autonomous Execution and 80.8% SWE-bench Accuracy, if Complex Task Completion Is the Priority

Claude Code Max 5x at $100/month delivers 80.8% SWE-bench Verified accuracy, a 1-million-token context window, Agent Teams for parallel sub-agent coordination, and Model Context Protocol support for external tool connections.

4 developer profiles where Claude Code is the confirmed correct choice:

- Large codebase engineers: developers working on monorepos requiring cross-service dependency analysis that exceeds Copilot’s 32,000-to-200,000-token context range.

- Complex refactoring task owners: architecture-level migrations and API deprecations spanning 10+ files simultaneously, where Copilot Agent Mode’s documented file-count limitation applies.

- Developers requiring autonomous execution: those who assign complete tasks to the AI agent and review diffs at completion, rather than guiding code generation line by line inside an editor.

- MCP-integrated workflow teams: engineers whose coding sessions require direct connections to PostgreSQL, Redis, or CI/CD systems to generate accurate data-aware code.

Claude Code Pro at $20/month suits occasional complex tasks. Claude Code Max 5x at $100/month is the practical minimum tier for developers running sustained daily agentic sessions. For a broader comparison of Claude’s capabilities against other leading AI models, see Claude vs ChatGPT.

Does Claude Code Outperform GitHub Copilot on Software Engineering Benchmarks?

Claude Code scores 80.8% on SWE-bench Verified; GitHub Copilot does not publish an equivalent autonomous benchmark. The 2 tools measure performance through different benchmark types because they are designed for different outcomes.

SWE-bench Verified measures how often an AI resolves real open-source GitHub issues requiring multi-file edits without human intervention. Claude Code achieves 80.8% on this benchmark using Opus 4.6. GitHub Copilot measures productivity through 2 different metrics: developers complete tasks 55% faster and report 75% higher job satisfaction. These figures quantify inline coding speed and perceived usefulness, not autonomous issue resolution rate.

Direct performance comparison across these 2 metric types is not valid. Claude Code produces superior scores on autonomous task benchmarks. GitHub Copilot produces documented improvements on developer-in-the-loop productivity metrics. The correct evaluation framework depends entirely on which outcome the development team requires.

Can GitHub Copilot Access Claude Sonnet 4.6 and Opus 4.6 Models?

GitHub Copilot Pro and Pro+ support Claude Sonnet 4.6 and Opus 4.6 via the multi-model selector inside Copilot Chat and Copilot Agent Mode at no additional cost beyond the standard $10/month Pro subscription.

Copilot users who select Claude Sonnet 4.6 in the multi-model selector access the same 74.2% Aider polyglot benchmark performance as Claude Code Pro users. The model performance gap between the 2 tools closes at the Pro tier when Copilot operates on Claude Sonnet 4.6 rather than its default GPT-4o model.

The 2 tools differ in how they deploy these models, not which models they access. Copilot deploys Claude Sonnet 4.6 inside the IDE for inline completions and bounded agent tasks. Claude Code deploys the same model in the terminal for full-repository autonomous execution using 1-million-token context, Agent Teams coordination, and Model Context Protocol integrations that Copilot’s architecture does not provide.